Code audit for AI-generated apps: findings and a 72h plan

A code audit for AI-generated apps reveals hidden bugs, security gaps, and scaling risks. Here's how to turn the findings into a 24-72 hour fix plan with clear acceptance checks.

What a code audit is (and why AI-generated apps need one)

A code audit is a careful review of your app’s code and setup to find what’s wrong, what’s risky, and what’s likely to break when real users show up. Think of it like an inspection report. It doesn’t just say “this is bad.” It points to the exact spots, explains the impact, and tells you what to fix first.

AI-built prototypes often look finished, but fail in production because they were generated to “work once” on a happy path. Small gaps add up fast: missing error handling, inconsistent data rules, fragile auth flows, or secrets pasted into the repo. A demo that works on your laptop can fall apart when you add real traffic, payments, or multiple user roles.

A good audit for AI-generated apps also checks the parts that are easy to ignore until they hurt: security basics, dependency risks, database access patterns, and whether the app can actually be deployed and maintained.

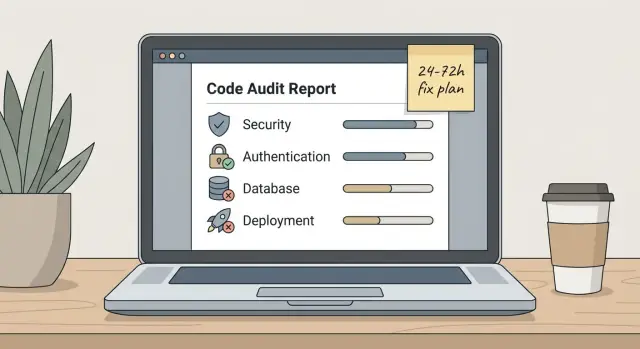

At the end of an audit, you should get:

- A clear list of issues, grouped by security, reliability, and maintainability

- A priority order (what must be fixed now vs what can wait)

- A simple remediation plan (often mapped to 24-72 hours)

- Acceptance checks for each fix (how you confirm it’s truly done)

Set expectations early: an audit is the diagnosis. Fixes are the treatment. Blending them sounds efficient, but it often creates rework because you start patching symptoms before you understand root causes.

Example: a founder brings a login flow built with Bolt or Cursor that looks “working.” The audit finds exposed API keys, auth that can be bypassed, and server routes that trust user input. That’s not a “polish later” issue. It’s a stop-ship issue.

At FixMyMess, we start with an audit so the next 48-72 hours of work stays focused, testable, and predictable.

What the audit actually checks

A code audit for an AI-generated app isn’t about style preferences. It’s about one question: will this app behave safely and predictably when real users hit it?

Security basics

First, we look for obvious ways the app can be broken into. That includes exposed secrets (API keys in the repo, client-side env vars, logs), weak authentication (skipped checks, “temporary” bypasses left in place), and missing authorization (any logged-in user can access admin routes or other people’s data).

We also check input validation end to end. AI-written code often validates on the front end but forgets the server, and the server is where validation actually counts, because an attacker can skip your UI and call the API directly. Permissions, file uploads, and third-party webhooks get extra attention because one mistake can turn into a data leak.
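
Here is what "validate on the server too" can look like. This is a minimal sketch, assuming a hypothetical signup payload with `email` and `name` fields. It re-checks types and formats no matter what the front end did, and it returns only known fields so an extra key like `"role": "admin"` never reaches the database.

```python
import re
from typing import Any

# Hypothetical rules for a signup payload; swap in your real schema.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
MAX_NAME_LEN = 100


class ValidationError(ValueError):
    """Raised when a request body fails server-side validation."""


def validate_signup(payload: dict[str, Any]) -> dict[str, Any]:
    """Return a cleaned copy of the payload, or raise ValidationError.

    This runs on the server even if the front end already checked the form,
    because anyone can send a request straight to the API.
    """
    email = payload.get("email")
    if not isinstance(email, str) or not EMAIL_RE.match(email.strip()):
        raise ValidationError("email must be a valid address")

    name = payload.get("name")
    if not isinstance(name, str) or not name.strip():
        raise ValidationError("name is required")
    if len(name.strip()) > MAX_NAME_LEN:
        raise ValidationError(f"name must be at most {MAX_NAME_LEN} characters")

    # Return only known fields, so extras like {"role": "admin"} are dropped.
    return {"email": email.strip().lower(), "name": name.strip()}
```

Whatever framework you use, the design choice is the same: build the stored object from an allow-list of fields rather than passing the request body through.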

Reliability, data risks, and deploy readiness

Next is reliability: where the app crashes, hangs, or silently fails. We trace the main user flows (sign up, login, create, pay, invite, export) and look for missing error handling, timeouts, and "happy path only" logic. Flaky flows often come from race conditions, partial state updates, or assumptions like "this API always returns X."

Then we review data handling. Common findings include broken migrations, queries that fail under real data, and unsafe SQL patterns (string-built queries that invite SQL injection). We also check that backups, rollbacks, and data constraints are realistic for production.
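
Partial state updates are often fixed by grouping related writes into one transaction and letting the database enforce basic rules. The sketch below uses Python's built-in `sqlite3` and assumes a hypothetical invoices/line_items schema. If any line item breaks the `CHECK` constraint, the invoice row is rolled back too, so you never end up with half a record.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    # Hypothetical tables; the CHECK constraint rejects bad data at the database level.
    conn.executescript("""
        CREATE TABLE invoices (
            id INTEGER PRIMARY KEY,
            user_id INTEGER NOT NULL
        );
        CREATE TABLE line_items (
            id INTEGER PRIMARY KEY,
            invoice_id INTEGER NOT NULL REFERENCES invoices(id),
            amount_cents INTEGER NOT NULL CHECK (amount_cents > 0)
        );
    """)


def create_invoice(conn: sqlite3.Connection, user_id: int, amounts: list[int]) -> int:
    """Insert an invoice and all of its line items, or nothing at all."""
    # Used as a context manager, the connection commits if the block succeeds
    # and rolls back if any statement raises.
    with conn:
        cur = conn.execute("INSERT INTO invoices (user_id) VALUES (?)", (user_id,))
        invoice_id = cur.lastrowid
        conn.executemany(
            "INSERT INTO line_items (invoice_id, amount_cents) VALUES (?, ?)",
            [(invoice_id, amount) for amount in amounts],
        )
    return invoice_id
```

A failed call raises `sqlite3.IntegrityError` and leaves neither an invoice nor orphaned line items behind. Other databases and ORMs expose the same idea through their own transaction APIs.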

Finally, we assess maintainability and deployment readiness. If the architecture is spaghetti, logic is duplicated, or files mix UI, business rules, and database calls in one place, fixes become slow and risky. On the deploy side, we confirm env vars are wired correctly, build steps are repeatable, configs are consistent across environments, and nothing depends on a developer’s local machine.
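
For the env var side, one common pattern is to validate configuration once at startup and refuse to boot if anything is missing. This is a minimal sketch. The variable names are hypothetical placeholders for whatever your app actually reads.

```python
import os
from collections.abc import Mapping
from typing import Optional

# Hypothetical names; list exactly what your app reads.
REQUIRED_VARS = ("DATABASE_URL", "SESSION_SECRET", "PAYMENT_WEBHOOK_SECRET")


def load_config(env: Optional[Mapping[str, str]] = None) -> dict[str, str]:
    """Return required settings, or raise if any are missing or blank.

    No fallback defaults for secrets: a missing value should stop the boot
    (and the deploy) instead of quietly running with a placeholder.
    """
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_VARS if not env.get(name, "").strip()]
    if missing:
        raise RuntimeError("Missing required environment variables: " + ", ".join(missing))
    return {name: env[name] for name in REQUIRED_VARS}


# Call once at startup, before the app starts serving requests:
# CONFIG = load_config()
```

Failing loudly at boot turns "works on my laptop" config drift into an error you see during deploy, not after users hit it.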

A strong audit ends with a short list of concrete issues, each tied to user impact and a clear “done” condition, so remediation stays predictable.

Example findings you see a lot in AI-generated apps

AI-generated apps often show the same patterns even when the UI looks polished. The demo works, but it breaks under real users, real data, and real traffic.

Common findings (and what they look like when you test):

- Broken authentication and session handling. People get logged out randomly, sessions never expire, or the app trusts a client-side flag like `isLoggedIn=true`. A quick check: open two browsers, see if the session behaves consistently, and confirm that logout actually invalidates tokens.

- Exposed secrets in the repo or logs. API keys, database URLs, and service tokens show up in config files, sample `.env` files committed by accident, or server logs. This is one of the fastest ways an app gets compromised, especially if the key has broad permissions.

- SQL injection and unsafe query building. You see string concatenation like `"... WHERE email='" + email + "'"` or raw filters passed straight into queries. Even if the app uses an ORM, AI-generated code often mixes safe and unsafe patterns.

- Authorization gaps (cross-user data access). The app checks that a user is logged in, but not that they're allowed to access a specific record. A common symptom: changing an ID in the URL or request body shows someone else's invoices, projects, or profile.

- Unscalable patterns that feel fine until they don’t. Heavy queries without indexes, endpoints that return all rows (no pagination), and tight coupling where one screen triggers many server calls. It passes with 20 records, then times out at 2,000.
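
The SQL injection finding is easy to demonstrate. This sketch uses Python's `sqlite3` and a throwaway in-memory table. The `?` placeholder is sqlite3's style; other drivers use `%s`, `$1`, or named parameters for the same idea.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT UNIQUE)")
conn.executemany("INSERT INTO users (email) VALUES (?)", [("a@example.com",), ("b@example.com",)])


def find_user_unsafe(email: str):
    # UNSAFE: user input becomes part of the SQL text itself.
    sql = "SELECT id, email FROM users WHERE email='" + email + "'"
    return conn.execute(sql).fetchall()


def find_user_safe(email: str):
    # SAFE: the driver sends the value separately from the SQL text.
    return conn.execute("SELECT id, email FROM users WHERE email = ?", (email,)).fetchall()


payload = "' OR '1'='1"
print(len(find_user_unsafe(payload)))  # 2: the injected OR matches every row
print(len(find_user_safe(payload)))    # 0: the payload is just an odd email string
```

The fix is mechanical once you find the pattern: every value from a request goes in as a parameter, never as part of the string.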

A small real-world example: a founder brings a prototype built in Bolt or Replit that “works” for one test account. In a FixMyMess audit, we often find login is fine, but user-to-data checks are missing. That turns a simple feature into a privacy incident waiting to happen.

These issues are fixable, but you need proof they’re fixed: a reproducible test, a minimal patch, and an acceptance check you can run again tomorrow.

How to prioritize: severity, effort, and dependencies

Audits often produce a long list of issues. The fastest way to make that list useful is to sort each item by three things: how bad it is, how long it takes, and what has to be fixed first.

Group findings by impact. Most problems fall into four buckets: security holes, data loss risks, downtime risks, and plain UX bugs. This keeps you from spending day one polishing a screen while a leaked secret key is still sitting in the repo.

Severity: keep the labels simple

Use four labels and be strict about what counts:

- Critical: active security risk, unauthorized access, money loss, or an outage waiting to happen

- High: could become critical with normal use (wrong permissions, broken auth flows, weak input validation)

- Medium: hurts reliability or correctness but has a workaround (slow queries, flaky jobs)

- Low: cosmetic or minor UX issues (copy, spacing, non-blocking edge cases)

A concrete example: if login sometimes fails, that’s often High. If any user can access another user’s data by changing an ID in the URL, that’s Critical.

Effort: size in hours, not weeks

For fast remediation, estimate in hours. A simple rule works well: 1-2h (small), 3-6h (medium), 7-12h (large). If something feels bigger than a day, split it into smaller fixes with clear outcomes.

Dependencies matter as much as severity. Broken authentication blocks permission fixes. Database schema problems block API fixes. Deployment issues block everything that needs testing in a real environment.

A practical order:

- Fix Critical items first, especially security and data loss risks

- Fix anything that unblocks other work (auth, env config, build/deploy)

- Take the best “high impact, low hours” wins

- Postpone Low items unless they touch trust (payment, login, user data)
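
If the list gets long, these ordering rules can be applied mechanically. This is a rough sketch, assuming each finding has a severity label, an hour estimate, and a list of finding ids that must be fixed first (every referenced id must exist in the list). A blocker inherits the severity of the worst item it unblocks, so a Medium config fix that blocks Critical work gets scheduled early. The finding ids are hypothetical.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter

SEVERITY_RANK = {"Critical": 0, "High": 1, "Medium": 2, "Low": 3}


@dataclass
class Finding:
    id: str
    severity: str                      # "Critical", "High", "Medium" or "Low"
    hours: int                         # rough effort estimate
    blocked_by: list[str] = field(default_factory=list)


def plan(findings: list[Finding]) -> list[str]:
    """Return finding ids in a workable order.

    Blockers always come before the work they unblock. Among ready items,
    the most severe downstream impact goes first; ties go to fewer hours.
    """
    by_id = {f.id: f for f in findings}
    sorter = TopologicalSorter({f.id: f.blocked_by for f in findings})
    sorter.prepare()  # raises graphlib.CycleError if two fixes block each other

    # Map each finding to the findings it unblocks.
    dependents: dict[str, list[str]] = {f.id: [] for f in findings}
    for f in findings:
        for dep in f.blocked_by:
            dependents[dep].append(f.id)

    memo: dict[str, int] = {}

    def rank(fid: str) -> int:
        # A blocker is as urgent as the worst item waiting on it.
        if fid not in memo:
            own = SEVERITY_RANK[by_id[fid].severity]
            memo[fid] = min([own] + [rank(d) for d in dependents[fid]])
        return memo[fid]

    order: list[str] = []
    ready: list[str] = []
    while sorter.is_active():
        ready.extend(sorter.get_ready())
        ready.sort(key=lambda fid: (rank(fid), by_id[fid].hours))
        nxt = ready.pop(0)
        order.append(nxt)
        sorter.done(nxt)
    return order
```

For example, with a Critical key rotation (1h), a Critical ownership fix blocked by a High auth fix, which is itself blocked by a Medium env-config fix, plus a Low copy tweak, this yields: rotate keys, env config, auth, ownership, copy tweak.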

Teams like FixMyMess often turn this into a short queue (5-10 items) for the next 24-72 hours, each with an owner, an hour estimate, and a clear “done” check.

Turn findings into a 24-72 hour remediation plan (step by step)

A good audit can produce a lot of findings. You get value when you turn that list into a short release plan with one clear goal: “What do we need to ship safely by Friday?” With AI-generated apps, problems often stack on top of each other, so sequencing matters.

Day 0 (1-3 hours): lock the target

Freeze scope. Pick one release target (for example: “users can sign up, pay, and see their dashboard”) and pause new features until it ships. Capture the exact environment you’ll release from (branch, DB, hosting, API keys) so you’re not chasing moving parts.

Then build a plan that fits 24-72 hours:

- Stop the bleeding (0-6 hours): rotate any exposed secrets, remove keys from the repo, lock down admin routes, and limit production access to a short list of people

- Restore critical user flows (6-24 hours): fix the top 1-3 journeys (sign in, checkout, create the core object) and make them testable end to end

- Stabilize the data layer (24-36 hours): fix broken migrations, missing indexes, unsafe queries, and weak input validation that causes crashes or bad data

- Refactor only the worst hotspots (36-60 hours): target the small areas causing most bugs (a single giant file, duplicate auth checks, tangled state). Don’t rewrite the whole app

- Get ready to deploy (60-72 hours): tighten config, add basic monitoring, and do a clean release

Days 1-3: focus on dependencies, not perfection

Order work by what blocks everything else. If auth is broken, it blocks testing checkout and dashboard. If the database schema is unstable, you’ll keep re-breaking features during fixes.

For deployment readiness, keep it minimum-viable:

- Error tracking with alerts for spikes

- Health check endpoint and uptime monitoring

- A rollback plan (one-click or documented manual rollback)

- Environment variables audited (no defaults for secrets)

- A short smoke test script you can run after deploy
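
A health check and a smoke test can both be tiny. This sketch uses only Python's standard library: a stand-in `/health` route (in a real app it lives in your web framework) and a smoke test that reports pass or fail per path. The paths are assumptions; point the smoke test at the one or two endpoints your core flow depends on.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Stand-in app exposing only GET /health."""

    def do_GET(self):
        if self.path == "/health":
            # Keep it cheap; a real check might also ping the database.
            body = json.dumps({"status": "ok"}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        pass  # silence per-request logging for the demo


def smoke_test(base_url: str, paths) -> dict[str, bool]:
    """Request each path once; a path passes only if it answers HTTP 200."""
    results = {}
    for path in paths:
        try:
            with urllib.request.urlopen(base_url + path, timeout=5) as resp:
                results[path] = resp.status == 200
        except OSError:  # covers URLError, HTTPError (4xx/5xx) and timeouts
            results[path] = False
    return results


if __name__ == "__main__":
    server = ThreadingHTTPServer(("127.0.0.1", 0), HealthHandler)  # port 0: pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base = f"http://127.0.0.1:{server.server_address[1]}"
    print(smoke_test(base, ["/health", "/missing"]))
    server.shutdown()
    server.server_close()
```

After a real deploy you would call `smoke_test` with your production base URL and treat any `False` as a reason to roll back.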

Many teams keep this plan on a single page: goal, timeline, owner per step, and “done” checks for each item.

Acceptance checks: how you know each fix is done

Fast fixes fail when nobody agrees on what “done” means. Acceptance checks turn a vague task like “fix auth” into a simple statement you can verify in minutes.

For every finding, write 2-5 checks in plain language. Each check should be something you can prove by running the app, looking at logs, or reviewing a specific file or setting. If you can’t verify it, it’s not a check.

Security checks (must-pass)

Security work is only real when secrets and access rules are confirmed, not assumed.

- No secrets committed: API keys and private tokens are removed from code, and git history is reviewed for obvious leaks

- Least privilege: the app uses a limited service account and only the permissions it needs (no admin-by-default)

- Input is safe: key endpoints reject suspicious input (basic SQL injection and script payload attempts fail)

- Auth boundaries hold: private pages and APIs return “not authorized” when a user is logged out or uses a different account

Functional, data, regression, and deployment checks

Tie checks to the core user journey. Pick one happy path plus 2 unhappy paths (wrong password, expired session, missing required field).

- Core flow works end to end: a new user can sign up, sign in, and complete the main action (for example, create a project, save it, and see it on reload)

- Data is correct: creates/updates/deletes persist, and records don’t bleed between users

- No unauthorized data access: user A can’t view or edit user B’s data even if they guess an ID

- Regression check: 3-5 key screens still load and function after changes (no blank screens, no console spam)

- Deployment readiness: the build succeeds, required env vars are set, and a basic smoke test passes after deploy (log in, hit one key API, save one record)

Example: if an audit finds “broken authentication + exposed secrets,” the acceptance checks aren’t “auth improved.” They’re “login works,” “private routes block guests,” “secrets are removed,” and “production env vars are configured and verified in a smoke test.”

Example scenario: from broken prototype to ship-ready in 72 hours

A solo founder built a simple SaaS prototype with an AI coding tool: users sign up, connect a payment method, and generate PDF reports. It looks fine in demos, but real users hit errors. Signups sometimes fail, the app logs people out, and a tester finds that changing a URL lets them see another user’s report.

A realistic audit summary might look like this:

- Broken auth flow: refresh tokens are stored in localStorage and sessions randomly invalidate

- Exposed secrets: a third-party API key is hard-coded in the frontend build output

- Access control bug: report endpoints don't verify ownership (an insecure direct object reference, or IDOR)

- SQL injection risk: raw string queries are built from request parameters

- Payment webhooks: signature verification is missing, so events can be spoofed

- Error handling: silent failures and generic 500s with no useful logs

- Architecture: duplicated business logic in routes and UI, making fixes fragile

A simple 72-hour remediation plan

First 4 hours (stop the bleeding): confirm the most dangerous paths and lock them down.

- Remove exposed secrets from client code and rotate keys

- Add ownership checks on any “get report” or “download” endpoint

- Turn on basic request logging and capture a short list of top failing routes

Acceptance checks (4h): no secrets appear in the client bundle; one user can’t access another user’s report even with a guessed ID; logs show who called what and when (without storing passwords or full tokens).
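
For the logging check, the key detail is scrubbing sensitive fields before anything is written. This is a minimal sketch; the set of sensitive keys is a hypothetical starting point, not a complete list.

```python
import logging

# Hypothetical starting list; add any field names your app uses for secrets.
SENSITIVE_KEYS = {"password", "token", "access_token", "refresh_token",
                  "authorization", "api_key", "cookie"}

logger = logging.getLogger("requests")


def redact(data: dict) -> dict:
    """Return a copy of `data` with sensitive values masked, recursing into dicts."""
    clean = {}
    for key, value in data.items():
        if str(key).lower() in SENSITIVE_KEYS:
            clean[key] = "[REDACTED]"
        elif isinstance(value, dict):
            clean[key] = redact(value)
        else:
            clean[key] = value
    return clean


def log_request(user_id, method: str, path: str, body: dict) -> None:
    # Who called what; the logging formatter can add "when" via %(asctime)s.
    logger.info("user=%s %s %s body=%s", user_id, method, path, redact(body))
```

Redacting by key name is blunt but predictable, which is what you want under time pressure.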

By 24 hours (make core flows reliable): stabilize auth and close common injection paths.

- Move tokens to httpOnly cookies (or fix the session strategy) and add consistent auth middleware

- Replace raw SQL strings with parameterized queries

- Add webhook signature verification for payments

- Add clear error messages for user-facing failures and safe server-side logs for debugging
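
Moving tokens out of localStorage usually means the server sets a session cookie that page scripts can't read. This sketch uses Python's `http.cookies`, assuming server-side sessions keyed by a random id. The cookie name `session` and the one-week lifetime are assumptions, and your framework likely has its own helper for setting these same attributes.

```python
import secrets
from http.cookies import SimpleCookie


def new_session_id() -> str:
    # Unguessable id; the session data itself stays on the server.
    return secrets.token_urlsafe(32)


def session_cookie_header(session_id: str, max_age: int = 60 * 60 * 24 * 7) -> str:
    """Build a Set-Cookie header value for a server-side session id."""
    cookie = SimpleCookie()
    cookie["session"] = session_id
    morsel = cookie["session"]
    morsel["httponly"] = True      # page JavaScript (and injected scripts) can't read it
    morsel["secure"] = True        # only sent over HTTPS
    morsel["samesite"] = "Lax"     # withheld from most cross-site requests
    morsel["path"] = "/"
    morsel["max-age"] = max_age    # the browser drops it after this many seconds
    return morsel.OutputString()
```

The server still needs to expire and delete the session on its side at logout; the cookie attributes only control how the browser stores and sends the id.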

Acceptance checks (24h): sign up/login works 20 times in a row without random logouts; basic SQL injection tests fail safely; webhook requests without a valid signature are rejected.
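
Webhook signature schemes differ by provider (header names, timestamp format, encoding), so follow your provider's docs. As an illustration only, this sketch assumes a common shape: an HMAC-SHA256 hex digest over `"<timestamp>.<raw body>"` using a shared secret. Verify against the raw request bytes, before any JSON parsing.

```python
import hashlib
import hmac
import time


def sign(secret: bytes, timestamp: int, raw_body: bytes) -> str:
    """Expected signature under the assumed scheme: HMAC-SHA256 of b"<timestamp>.<body>"."""
    message = str(timestamp).encode() + b"." + raw_body
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify_webhook(secret: bytes, timestamp: int, raw_body: bytes, signature: str,
                   tolerance_s: int = 300, now=None) -> bool:
    """Return True only for a recent event whose signature matches."""
    now = time.time() if now is None else now
    # Reject old or future-dated events to limit replayed requests.
    if abs(now - timestamp) > tolerance_s:
        return False
    expected = sign(secret, timestamp, raw_body)
    # Constant-time comparison, so timing doesn't reveal how much of the signature matched.
    return hmac.compare_digest(expected, signature)
```

Requests that fail verification should get a 4xx response and trigger no side effects, which is exactly what the 24h acceptance check tests.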

By 72 hours (ship-ready and calmer to operate): refactor the risky parts and prep deployment.

- Pull duplicated logic into a small service layer so fixes happen in one place

- Add rate limiting on auth endpoints and tighten CORS

- Add health checks and minimal monitoring for key endpoints

- Run a short regression pass on the top 10 user actions

Acceptance checks (72h): core flows (signup, payment, report generation, download) pass a checklist; the app returns sane errors (no stack traces to users); deploy is repeatable and documented.
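
For rate limiting on auth endpoints, a sliding window per client is a simple starting point. This is a minimal in-memory sketch; the key format (for example IP plus route) and the limits are assumptions to tune.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` attempts per `window_s` seconds for each key.

    In-memory only: fine for a single process, but multiple app instances
    need a shared store (for example Redis) so the limit applies across them.
    """

    def __init__(self, limit: int = 5, window_s: float = 60.0, clock=time.monotonic):
        self.limit = limit
        self.window_s = window_s
        self.clock = clock
        self.attempts = defaultdict(deque)  # key -> timestamps of recent allowed attempts

    def allow(self, key: str) -> bool:
        now = self.clock()
        recent = self.attempts[key]
        # Drop attempts that have fallen out of the window.
        while recent and now - recent[0] >= self.window_s:
            recent.popleft()
        if len(recent) >= self.limit:
            return False
        recent.append(now)
        return True
```

A login handler would call `allow(f"{client_ip}:/login")` first and return HTTP 429 when it comes back `False`.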

What’s safe to ship vs backlog

Safe to ship after 72 hours: signups and logins are stable, user data is properly isolated, payments can’t be spoofed, and the app has enough logging to support real users.

Backlog items (not blockers if risk is low): nicer UI validation, deeper performance work, long-term test coverage, and broader refactors. This is the kind of fast, focused path teams use when they bring a broken AI-generated prototype to FixMyMess for an audit and a 48-72 hour remediation push.

Common mistakes when fixing fast

Speed is great, but fast fixes can turn into long nights if you pick the wrong fights. Audits usually surface a few hard blockers that stop the app from being safe or stable. The biggest mistake is ignoring those blockers and starting a full rewrite because the code feels messy.

A rewrite sounds clean, but it usually blows up your 24-72 hour window. You lose working pieces, create new bugs, and still have to solve the same basics (auth, data rules, deployments). Fix the broken paths first, then refactor what you touched.

Another trap is polishing the UI while serious holes are still open. If secrets are exposed, inputs aren’t validated, or your database queries are unsafe, a nicer button doesn’t help. Security has to come before cosmetics, even when the demo is tomorrow.

Sign-off is where rushed projects often fail. If nobody owns acceptance checks, fixes stay “almost done” and arguments start: “It works for me” vs “It broke in staging.” Pick one person who can say yes or no for each fix, and keep the checks simple and testable.

Changing too much at once is also risky. Big commits make it hard to see what caused a bug, and hard to roll back safely. Even in a hurry, you want small, focused changes and a clear rollback plan (for example, the last known good commit and a way to disable new behavior).

One security-specific mistake shows up constantly: treating authentication and authorization as the same thing. Logging in isn’t the same as having permission. It’s easy to “fix” login and still let any logged-in user access other people’s data.
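
One way to keep the two separate in code: authentication answers "who is this?", authorization answers "may this user touch this record?". This is a minimal sketch with a hypothetical in-memory report store; in a real app, the same ownership rule belongs in the database query itself.

```python
from dataclasses import dataclass
from typing import Optional


class Unauthenticated(Exception):
    """No valid session: respond 401."""


class NotFound(Exception):
    """Record missing or owned by someone else: respond 404."""


@dataclass
class Report:
    id: int
    owner_id: int
    title: str


# Hypothetical store standing in for a database table.
REPORTS = {
    1: Report(1, owner_id=100, title="Alice's Q1"),
    2: Report(2, owner_id=200, title="Bob's Q1"),
}


def get_report(current_user_id: Optional[int], report_id: int) -> Report:
    # Authentication: is anyone logged in?
    if current_user_id is None:
        raise Unauthenticated()
    report = REPORTS.get(report_id)
    # Authorization: is this user allowed to see this particular record?
    if report is None or report.owner_id != current_user_id:
        raise NotFound()
    return report
```

With SQL, the safest form puts ownership in the query (`WHERE id = ? AND owner_id = ?`), so a guessed ID simply returns nothing. Answering 404 rather than 403 for someone else's record avoids confirming that the ID exists; 403 is also a common choice.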

Fast-fix traps to watch for:

- Rewriting major screens instead of fixing the few blockers the audit found

- Fixing layout and copy while security issues are still open

- Shipping a fix without an owner who can run and sign off the acceptance checks

- Merging large changes without a rollback plan

- Verifying login works, but not verifying access rules per role and per resource

If you’re working with a service like FixMyMess, ask for a plan that keeps changes small, prioritizes safety, and ends each task with a clear pass/fail check.

Quick pre-ship checklist (10 minutes)

Use this right before you ship. It’s not a full review. It’s a fast safety check for the most common ways AI-generated apps break in production.

1) Secrets and keys

Start with the mistake most likely to turn into an incident.

- Confirm there are no secrets committed in the repo, build logs, or client-side code

- Rotate any keys that were ever pasted into prompts, chat logs, or `.env` files shared with others

- Check that production uses its own credentials (not your local dev ones)
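
A quick local pass can catch obvious leaks before a proper tool does. This rough sketch looks for a few well-known key shapes in the working tree, including build output. It does not read git history, which needs a dedicated scanner such as gitleaks or TruffleHog. The patterns are illustrative and will miss plenty.

```python
import re
from pathlib import Path

# Illustrative patterns only; real scanners ship far larger rule sets.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "stripe_live_secret": re.compile(r"\bsk_live_[0-9A-Za-z]{10,}"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded_secret": re.compile(
        r"""(?i)(?:api_?key|secret|token|password)\w*\s*[:=]\s*["'][^"'\s]{8,}["']"""
    ),
}

# Dependency folders are skipped; build output (dist/, build/) is scanned on purpose.
SKIP_DIRS = {".git", "node_modules", ".venv"}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line matching a pattern."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, rule))
    return hits


def scan_tree(root: str = ".") -> list[tuple[str, int, str]]:
    """Scan readable text files under `root`, returning (path, line, rule)."""
    findings = []
    for path in Path(root).rglob("*"):
        if not path.is_file() or SKIP_DIRS.intersection(path.parts):
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # binary or unreadable file
        findings.extend((str(path), n, rule) for n, rule in scan_text(text))
    return findings
```

Treat every hit as "rotate, then remove," in that order: deleting a committed key doesn't make it secret again.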

2) Auth and access rules

Do one real end-to-end pass, not just “it loads.” Make sure the rules match your product, not whatever the generator guessed.

Create a fresh account, then verify the basics: sign up, sign in, sign out, password reset (if you have it). Then try one action a user should not be allowed to do (for example, open another user’s record by changing an ID in the URL). It should fail with a clear message.

3) Inputs and database safety

AI apps often work in the happy path but fall apart with odd input.

Manually test a few messy values: long text, empty fields, special characters, and unexpected file types. If your app builds queries, confirm it uses parameterized queries and doesn’t build SQL with string concatenation. If you’re not sure, treat it as unsafe until proven otherwise.

4) Smoke test the core flow

Pick one primary flow and run it like a real user.

Example: a new user signs up, completes onboarding, creates the main object (project/order/ticket), edits it, and sees it after refresh. Do it once in an incognito window to catch missing config and caching issues.

5) Errors, logs, and blank screens

Force one error on purpose (like submitting a required field blank) and watch what happens.

You want a useful message for the user, and a useful log for you. You don’t want blank screens, infinite spinners, or silent failures that only show up as “something went wrong.”
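
Getting both at once usually means catching unexpected errors at one boundary: log the full details with a reference id, and show the user a short message that carries the same id. This is a minimal, framework-agnostic sketch; `UserFacingError` and the `(status, body)` return shape are hypothetical conventions, not a specific library's API.

```python
import logging
import uuid

logger = logging.getLogger("app")


class UserFacingError(Exception):
    """An expected failure whose message is safe to show, e.g. a missing field."""

    def __init__(self, message: str, status: int = 400):
        super().__init__(message)
        self.message = message
        self.status = status


def handle(handler, *args, **kwargs):
    """Run a request handler and convert the outcome into (status, body)."""
    try:
        return 200, {"data": handler(*args, **kwargs)}
    except UserFacingError as exc:
        # Expected problem: tell the user exactly what to fix.
        return exc.status, {"error": exc.message}
    except Exception:
        # Unexpected problem: full traceback to the logs, tagged with a short id.
        error_id = uuid.uuid4().hex[:8]
        logger.exception("unhandled error id=%s", error_id)
        # The user gets the id to quote to support, never the stack trace.
        return 500, {"error": f"We couldn't complete that request. Reference: {error_id}"}
```

When a user reports a problem, the reference lets you find the matching traceback in seconds instead of guessing.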

6) Repeatable deploy and documented config

If you had to redeploy right now, could someone else do it?

Check that environment variables are listed, named clearly, and match what production actually uses. Then do a clean build and deploy from scratch (or a staging deploy) to confirm it’s repeatable.

If you’re working with FixMyMess, this checklist maps closely to what we verify after a free audit: the app should be safe, the core flow should work from a fresh account, and deploy should be predictable.

Next steps: getting an audit and moving to fixes

Progress starts when someone can see the whole picture. An audit works best when the reviewer can run the app, reproduce the main problems, and confirm what “done” means for you.

Before you ask for help, gather a few basics. You don’t need to be technical, but you do need to be organized:

- Repo access (or a zip) plus any related services (DB, auth provider, storage)

- A list of environments: local, staging, production, and who can access each

- Where secrets live today (env vars, hosting dashboard, hardcoded files)

- Your top 3 goals (example: “users can sign up,” “payments work,” “deploys are reliable”)

- A short bug list with steps to reproduce and screenshots if relevant

Sometimes patching is the right move. Other times a rebuild is simply faster. Rebuilds tend to win when the app has tangled structure across many files, repeated copy-paste logic, and no clear separation between frontend, backend, and data access. Patching tends to win when the core design is sound, but a few key areas are broken (like authentication, API validation, or one bad data model).

At FixMyMess, we treat remediation as two phases: diagnosis, then verified fixes. The audit produces a prioritized list of issues (security, logic, and deployment readiness), plus a short plan that fits a 24-72 hour window. We also include acceptance checks so you can confirm each fix without guessing. There’s a free code audit, and most remediation projects land in a 48-72 hour turnaround once access is in place.

A good handoff isn’t just “here’s the updated repo.” It should be a small, clear package you can trust:

- A written plan ordered by severity and dependencies

- The actual fixes (commits or a delivered codebase) with notes on what changed

- Acceptance checks for each item (how to test, what result to expect)

- A quick deployment runbook (what to set, where to click, and what to watch)

If you’re unsure whether to patch or rebuild, ask for a recommendation that includes time estimates and risk. The right next step is the one that gets you to a stable release without creating a bigger mess a week later.

If you want a second set of eyes on an AI-generated codebase, fixmymess.ai focuses on audits and fast remediation for prototypes built with tools like Lovable, Bolt, v0, Cursor, and Replit. A clear diagnosis plus testable acceptance checks makes the next 48-72 hours feel a lot less chaotic.

FAQ

What is a code audit, in plain terms?

A code audit is a structured review of your code and setup to find security holes, crash points, data risks, and deployment blockers. The output should be a prioritized list of issues with clear next steps, not vague feedback.

Why do AI-generated apps need audits more than normal apps?

Because many AI-generated apps are built to succeed on a single “happy path” and skip the messy real-world cases. Audits often uncover missing server-side validation, fragile auth/session handling, and secrets accidentally shipped with the code.

What should a good audit actually check?

Start with security basics like exposed secrets, authentication, and authorization (who can access what). Then check reliability of the main user flows, database/query safety, and whether the app can be deployed repeatably without relying on someone’s laptop setup.

What are the most common issues found in AI-generated prototypes?

Common red flags are hard-coded API keys, auth that trusts the client, missing ownership checks (so users can access other users’ records), and unsafe query building that can allow SQL injection. These can exist even when the UI looks polished.

What counts as a “stop-ship” issue?

Treat exposed secrets, bypassable auth, cross-user data access, and payment/webhook spoofing risks as stop-ship issues. If a bug could leak data, lose money, or allow unauthorized access, it should be fixed before anything cosmetic.

How do I prioritize the audit findings without getting overwhelmed?

Use simple labels like Critical, High, Medium, and Low, then estimate effort in hours. Fix Critical items first, then anything that unblocks other work (auth, env config, deploy), then the best high-impact fixes that take the least time.

What does a realistic 24–72 hour remediation plan look like?

Aim for a short queue of 5–10 items that fits your timeline. Sequence it as: rotate/remove exposed secrets and lock down access, stabilize the core flows (signup/login/pay/create), fix data layer issues, refactor only the worst hotspots, then confirm deployment is repeatable.

What are acceptance checks, and why do they matter?

Acceptance checks are small pass/fail tests that prove a fix is done, like “user A can’t access user B’s record even if they guess an ID” or “build succeeds with only documented env vars.” Without checks, fixes often become “it works for me” debates.

What mistakes do people make when trying to fix fast?

Treating authentication and authorization as the same thing, polishing UI while security holes remain, and making huge commits without a rollback plan are the big ones. Fast remediation works best with small, focused changes and clear verification steps.

How do I get started with FixMyMess if my AI-built app is breaking?

Gather repo access (or a zip), environment details (local/staging/prod), where secrets live, your top 3 goals, and a short bug list with steps to reproduce. FixMyMess starts with a free code audit, then can remediate most AI-generated projects in 48–72 hours with verified fixes and clear “done” checks.