Estimate remediation scope for an AI-generated codebase: red flags

Use this rubric to estimate remediation scope for an AI-generated codebase by spotting red flags in auth, data model, security, and architecture.

What you’re estimating, and why it matters

Remediation scope is an estimate of what it takes to make a codebase safe, stable, and ready for real users. It covers effort (hours or days), risk (what could break while you fix it), and unknowns (areas you can’t trust until you inspect or test them).

The first real decision usually isn’t “which bug first?” It’s “do we repair what’s here, or rebuild the same features on a cleaner foundation?” Pick the wrong path and you can spend a week patching problems that reappear as soon as you add one more feature.

AI-generated codebases often look great in a demo, but they can be uneven. You might see a polished UI sitting on top of brittle logic. One file follows decent patterns, the next hard-codes secrets, skips input checks, or mixes database queries with UI code. It’s not automatically “bad,” but it can hide sharp edges.

This rubric is designed for speed. You’re trying to answer two early questions:

- Can this be made production-ready with focused repairs, or is it faster and safer to restart?

- Where is the work likely to blow up (auth, data, security, architecture)?

Treat the result as a range, not a single number. A quick, honest scope estimate helps you set expectations and decide whether you need a second opinion before committing to weeks of guessing.

Inputs and a quick, timeboxed approach

To estimate scope, you need just enough information to answer one question: can this code be made safe and reliable without rewriting everything?

Gather the minimum inputs (before you open the code)

Ask for these upfront. If key pieces are missing, your estimate is mostly guesswork.

- Repo access (full source, not a zip of build output)

- Environment setup (env vars, secrets handling approach, and a sample .env template)

- Database details (schema, migrations, and a small data sample if it exists)

- Deployment target (where it must run and any constraints like regions or VPC)

- A short list of “must work” flows (signup, checkout, admin, and so on)

Timebox the first pass. Sixty to 120 minutes is usually enough to spot major risks without slipping into a full audit.

A simple flow works well: spend about 15 minutes getting it running, 30 minutes walking the core user path end to end, then 15 to 45 minutes scanning for big red flags (auth, data model, security, architecture).

What “done” looks like is a one-page result: top issues, a rough effort range, and a clear call (refactor vs rebuild).

Hard stops matter. If you don’t have the source code, can’t run it locally or in a clean environment, or nobody can explain what production data looks like, pause and mark the estimate as blocked.
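To make the hard stops harder to skip, here is a minimal Python sketch. It records the inputs listed above and returns a status before any scoring starts. `ScopeInputs` and `estimate_status` are hypothetical names chosen for this illustration, so adapt the checks to whatever your intake actually collects.

```python
from dataclasses import dataclass, field

@dataclass
class ScopeInputs:
    # Hypothetical intake record: one field per input listed above.
    has_source_repo: bool = False            # full source, not build output
    runs_in_clean_env: bool = False          # starts locally or in a fresh environment
    production_data_explained: bool = False  # someone can describe real data
    has_env_template: bool = False           # e.g. a sample .env file
    has_schema_and_migrations: bool = False
    deployment_target: str = ""
    must_work_flows: list[str] = field(default_factory=list)

def estimate_status(inputs: ScopeInputs) -> tuple[str, list[str]]:
    """Return ("blocked" | "guesswork" | "ready", reasons)."""
    hard_stops = []
    if not inputs.has_source_repo:
        hard_stops.append("no source code")
    if not inputs.runs_in_clean_env:
        hard_stops.append("cannot run in a clean environment")
    if not inputs.production_data_explained:
        hard_stops.append("nobody can explain production data")
    if hard_stops:
        return "blocked", hard_stops

    # Missing key pieces: an estimate is possible but mostly guesswork.
    gaps = []
    if not inputs.has_env_template:
        gaps.append("no env template")
    if not inputs.has_schema_and_migrations:
        gaps.append("no schema or migrations")
    if not inputs.deployment_target:
        gaps.append("no deployment target")
    if not inputs.must_work_flows:
        gaps.append("no must-work flows")
    return ("guesswork", gaps) if gaps else ("ready", [])
```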

First-pass triage: can it run, and does it do the core job?

Before you score anything, try to use the app like a real user. You’re not trying to debug every issue. You’re checking whether the codebase is basically alive or failing before it reaches the main workflow.

Start with an end-to-end run: can you start the server, open the app, sign up, log in, and complete the core action (create the thing, book the thing, send the thing)? If any step is blocked by a crash, blank screen, or broken route, scope usually jumps because you’re fixing foundations, not polish.

Take a quick look at configuration. Are environment variables documented? Do you see hard-coded API keys, tokens, or passwords in files or client-side code? Even one exposed secret can turn a “quick fix” into a wider security cleanup.
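A quick way to answer the hard-coded secrets question is a pattern scan over the repo. The sketch below is rough and assumption-heavy: the regexes are a small hypothetical sample, not a complete rule set, and a dedicated secret scanner will catch far more. Run it over the built front-end bundle as well as the source, because client-side secrets often only show up there.

```python
import re
from pathlib import Path

# A small, hypothetical sample of patterns; real scanners use much larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"AKIA[0-9A-Z]{16}"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "url_with_password": re.compile(r"\b\w+://[^\s:/@]+:[^\s@/]+@[^\s/]+"),
    "secret_assignment": re.compile(
        r"(?i)(api_?key|secret|password|passwd|token)\w*['\"]?\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}
SKIP_DIRS = {".git", "node_modules"}

def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern name) for every suspicious line."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits

def scan_repo(root: str) -> list[tuple[str, int, str]]:
    """Scan every readable text file under root, skipping vendored folders."""
    base = Path(root)
    findings = []
    for path in base.rglob("*"):
        if not path.is_file() or SKIP_DIRS.intersection(path.relative_to(base).parts):
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # binary or unreadable file
        for lineno, name in scan_text(text):
            findings.append((str(path.relative_to(base)), lineno, name))
    return findings
```

Any hit is worth treating as a leaked secret until proven otherwise; false positives are cheap to dismiss, missed keys are not.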

Also note the testing signal. Having no tests is common in prototypes, but it raises the risk in your estimate. Even a few smoke checks reduce guessing, and meaningful tests around auth and core flows can cut remediation time.

Capture unknowns immediately, because they often drive scope more than visible bugs:

- What step failed, and what error did you see?

- What parts of the core workflow are unclear or inconsistent?

- What data is being created or changed, and where is it stored?

- What must work in production (payments, emails, file uploads)?

Auth red flags that expand scope fast

Auth issues are rarely “just one bug.” They touch routing, data, session storage, and every protected feature. In AI-generated projects, auth is also where copy-paste code and missing server checks tend to show up.

A fast signal is whether the basics work end to end. If password reset emails never arrive, sessions expire at random, or the UI says “logged in” while the API rejects requests, you’re usually looking at more than a quick fix. Those symptoms often mean auth state is split across multiple places (frontend, backend, database, cookies) with no single source of truth.

Red flags to watch for

These patterns tend to expand scope quickly:

- Broken flows (reset links fail, logout doesn’t really log out, sessions die after refresh)

- Missing or inconsistent role checks (a normal user can load admin screens or hit admin endpoints)

- Partial OAuth (callback route errors, tokens stored “somewhere,” unclear refresh flow)

- Authorization enforced only in the UI (pages are hidden, but the server still returns the data)

A simple severity rubric helps keep the estimate honest:

- Low: one flow breaks, but the approach is consistent (a bug)

- Medium: the approach is inconsistent (mixed session and JWT, duplicated logic) and needs a design fix

- High: roles and server-side authorization are unsafe or unclear (rebuild candidate)

Example: if a prototype “protects” admin features by hiding buttons, but you can still call the admin API in the browser, treat it as high severity. Fixing it means defining permissions, enforcing them server-side, and retesting every feature that touches user data.
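Here is a framework-agnostic sketch of what enforcing permissions server-side means: the check runs on the server before the handler, no matter what the UI shows or hides. `require_role`, `Forbidden`, and the `user` dictionary shape are hypothetical. In a real app the user record must come from a verified session, and `Forbidden` would map to an HTTP 403.

```python
from functools import wraps

class Forbidden(Exception):
    """Raised when the caller lacks the required role (map to HTTP 403)."""

def require_role(role: str):
    """Decorator that enforces a role on the server before the handler runs."""
    def decorator(handler):
        @wraps(handler)
        def wrapper(user: dict, *args, **kwargs):
            # 'user' is assumed to come from a verified server-side session,
            # never from a flag or field the client sends.
            if role not in user.get("roles", ()):
                raise Forbidden(f"{role} role required")
            return handler(user, *args, **kwargs)
        return wrapper
    return decorator

@require_role("admin")
def list_all_users(user: dict) -> list[str]:
    # Hypothetical admin endpoint; real code would query the database.
    return ["alice@example.com", "bob@example.com"]
```

Because the check wraps the handler itself, calling the admin API directly from the browser fails the same way clicking a hidden button would.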

Data model red flags: where hidden complexity lives

If an AI-generated app “mostly works” but keeps breaking in odd ways, the data model is often the reason. Small-looking schema problems create big scope because every feature depends on the same tables, relationships, and rules.

A fast signal is schema mismatch: the code reads or writes fields that aren’t in the database, or the database has columns nobody uses. You’ll see missing column errors, nulls where the UI assumes a value, or “temporary” JSON blobs that quietly became permanent.
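If the app uses SQLite (an assumption for this sketch; server databases such as PostgreSQL and MySQL expose the same information through `information_schema`), you can list drift mechanically. `schema_drift` is a hypothetical helper, and the `expected` set is something you collect by hand from the code's models and queries.

```python
import sqlite3

def schema_drift(conn: sqlite3.Connection, table: str, expected: set[str]) -> dict[str, set[str]]:
    """Compare columns the code expects with columns the table actually has."""
    # The table name is interpolated, so only pass names from your own list.
    rows = conn.execute(f"PRAGMA table_info({table})").fetchall()
    actual = {row[1] for row in rows}  # column 1 of table_info is the column name
    return {
        "missing_in_db": expected - actual,   # code uses them, database lacks them
        "unused_in_code": actual - expected,  # database has them, code never touches them
    }

# Example with a throwaway in-memory table.
demo = sqlite3.connect(":memory:")
demo.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, legacy_flag INTEGER)")
print(schema_drift(demo, "users", {"id", "email", "display_name"}))
# {'missing_in_db': {'display_name'}, 'unused_in_code': {'legacy_flag'}}
```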

Another scope expander is having no clear source of truth for IDs, relationships, and ownership. An order might reference a user by email in one place, userId in another, and a random “owner” field somewhere else. At that point, fixes aren’t local. You have to decide what the app means, then update code, queries, and existing data.

Duplication and inconsistent naming across tables (users vs user vs app_users) usually means the model grew by copy-paste. It leads to reports that never match, bugs that return after “fixes,” and logic that forks into multiple versions.

A missing migration story is another red flag. If there’s no repeatable way to change schema safely, every change becomes a risky manual edit, and deployments can break production.
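A repeatable way to change schema can be as small as an ordered list of migrations plus a table that records which ones ran. This is a minimal SQLite sketch with hypothetical migration names; in practice you would use your framework's migration tool, but the idea is the same: running it twice changes nothing, and each migration commits together with its bookkeeping row.

```python
import sqlite3

# Ordered, append-only list of schema changes (hypothetical examples).
MIGRATIONS = [
    ("001_create_users", "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE)"),
    ("002_add_display_name", "ALTER TABLE users ADD COLUMN display_name TEXT"),
]

def migrate(conn: sqlite3.Connection) -> list[str]:
    """Apply migrations that have not run yet; safe to call on every deploy.

    Assumes no transaction is open on conn when it is called.
    """
    conn.execute("CREATE TABLE IF NOT EXISTS schema_migrations (name TEXT PRIMARY KEY)")
    done = {row[0] for row in conn.execute("SELECT name FROM schema_migrations")}
    applied = []
    for name, sql in MIGRATIONS:
        if name in done:
            continue
        try:
            # SQLite can roll back DDL, so the schema change and its
            # bookkeeping row either both land or neither does.
            conn.execute("BEGIN")
            conn.execute(sql)
            conn.execute("INSERT INTO schema_migrations (name) VALUES (?)", (name,))
            conn.commit()
        except sqlite3.Error:
            conn.rollback()
            raise
        applied.append(name)
    return applied
```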

High-risk signs that often push estimates up include skipped validation, deletes without constraints, missing tenant boundaries, relationships enforced only in frontend code, and “one table does everything” designs filled with optional fields. If you spot several at once, it may be cheaper to rebuild the data layer cleanly and reconnect the app than to chase bugs table by table.
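The usual fix for relationships enforced only in frontend code is to move the rules into the schema so the database refuses bad writes. This SQLite sketch uses a hypothetical `users`/`orders` schema: a composite foreign key keeps each order inside its user's tenant, `ON DELETE RESTRICT` blocks deletes that would orphan orders, and a `CHECK` rejects negative totals. SQLite only enforces foreign keys when `PRAGMA foreign_keys = ON` is set on each connection.

```python
import sqlite3

SCHEMA = """
CREATE TABLE users (
    id INTEGER PRIMARY KEY,
    tenant_id INTEGER NOT NULL,
    email TEXT NOT NULL UNIQUE,
    UNIQUE (id, tenant_id)  -- lets orders reference (user, tenant) as a pair
);
CREATE TABLE orders (
    id INTEGER PRIMARY KEY,
    tenant_id INTEGER NOT NULL,
    user_id INTEGER NOT NULL,
    total_cents INTEGER NOT NULL CHECK (total_cents >= 0),
    -- An order must belong to an existing user in the same tenant,
    -- and that user cannot be deleted while orders still point at them.
    FOREIGN KEY (user_id, tenant_id) REFERENCES users (id, tenant_id) ON DELETE RESTRICT
);
"""

def connect(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA foreign_keys = ON")  # off by default in SQLite, per connection
    return conn
```

Once the rules live in the schema, a buggy screen or a direct API call can no longer write an order for another tenant or delete a user out from under their orders.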

Security red flags that push you toward a rebuild

Security issues aren’t all equal. Some are “lock the doors” fixes you can add quickly. Others are signs the whole project was built without basic boundaries. In scope estimates, the second category often makes a rebuild safer (and sometimes faster) than patching.

Start with secrets. If API keys or database passwords show up in the repo, logs, or a front-end bundle, treat them as already leaked. Rotating keys is only step one. If the code still needs secrets in the browser to work, that’s a design problem, not a cleanup task.

Injection risk is another scope multiplier. If you see raw SQL pasted into the app, queries built by string concatenation, or “accept anything” inputs, you can’t just fix one endpoint. You need a consistent pattern for validation and database access across the project.
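The difference between the risky pattern and the consistent one is easiest to see side by side. This sketch uses Python's built-in `sqlite3` module and a hypothetical `users` table; the same placeholder idea applies to any database driver or query builder.

```python
import sqlite3

def find_user_unsafe(conn: sqlite3.Connection, email: str) -> list[tuple]:
    # Anti-pattern: user text is pasted into the SQL string itself.
    return conn.execute(f"SELECT id, email FROM users WHERE email = '{email}'").fetchall()

def find_user(conn: sqlite3.Connection, email: str) -> list[tuple]:
    # Placeholder binding: the driver sends email as data, never as SQL.
    return conn.execute("SELECT id, email FROM users WHERE email = ?", (email,)).fetchall()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany("INSERT INTO users (email) VALUES (?)", [("a@example.com",), ("b@example.com",)])

payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: the payload matches every row
print(len(find_user(conn, payload)))         # 0: treated as a literal email
```

Finding one `find_user_unsafe` is a bug; finding the pattern in many routes means the estimate should include replacing it everywhere and retesting each route.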

Rebuild-leaning signals often include client-side secrets “because it was easiest,” endpoints missing access checks, queries built from user text in multiple places, session handling that breaks under refresh or timeouts, and no clear boundary between public and private data.

Missing basics like rate limiting, CSRF protection, and reliable authorization checks can be patchable if the architecture is clean. But if every route is a special case, hardening turns into whack-a-mole.
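Clean architecture is what makes these basics patchable: if every request passes through one entry point, rate limiting becomes a single change there. Below is a minimal sliding-window limiter sketch with hypothetical names (`SlidingWindowLimiter`, `dispatch`). It keeps state in process memory, which only holds on a single server, so a multi-instance deployment would need a shared store instead.

```python
import time
from collections import defaultdict, deque
from typing import Callable

class SlidingWindowLimiter:
    """Allow at most `limit` requests per `window` seconds for each key.

    State lives in this process's memory, so the limit only holds on a
    single server; several instances would need a shared store instead.
    """

    def __init__(self, limit: int, window: float, clock: Callable[[], float] = time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock  # injectable so tests can control time
        self.hits: dict[str, deque] = defaultdict(deque)

    def allow(self, key: str) -> bool:
        now = self.clock()
        recent = self.hits[key]
        while recent and now - recent[0] >= self.window:
            recent.popleft()  # drop timestamps that fell out of the window
        if len(recent) >= self.limit:
            return False
        recent.append(now)
        return True

def dispatch(limiter: SlidingWindowLimiter, client_ip: str, handler: Callable[[], str]) -> tuple[int, str]:
    """Single entry point: every route passes through the same check."""
    if not limiter.allow(client_ip):
        return 429, "Too Many Requests"
    return 200, handler()
```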

Architecture red flags: refactor pain vs clean restart

Architecture is where prototype shortcuts become time sinks. This is often the deciding factor between a targeted refactor and a clean rebuild.

A common warning sign is “everything is everywhere”: the same business rule appears in a UI component, an API route, and a helper, each slightly different. When data access, request handling, and rendering are mixed together, every change risks breaking multiple places.
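The opposite of "everything is everywhere" is one function that owns each rule, with the UI and the API both calling it. This sketch uses a hypothetical refund rule (a 30-day window) purely to show the shape; `can_refund` and its two callers are illustrative names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

REFUND_WINDOW = timedelta(days=30)  # hypothetical policy value

@dataclass
class Order:
    id: int
    status: str
    paid_at: datetime

def can_refund(order: Order, now: datetime) -> bool:
    """Single source of truth for the refund rule."""
    return order.status == "paid" and now - order.paid_at <= REFUND_WINDOW

# UI layer: decides whether to show the button, but does not re-implement the rule.
def refund_button_visible(order: Order, now: datetime) -> bool:
    return can_refund(order, now)

# API layer: enforces the same rule on the server and returns an HTTP-style status.
def refund_endpoint(order: Order, now: datetime) -> int:
    return 200 if can_refund(order, now) else 409
```

Changing the window now means editing one constant, and the button and the endpoint cannot drift apart.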

Red flags that usually mean refactor pain

Look for patterns that make the code hard to reason about and hard to test:

- Core logic duplicated across files with no clear source of truth

- Database calls sprinkled inside UI or route handlers with no clear data layer

- Multiple ways to do the same task (two auth checks, three billing helpers)

- Slow paths baked in (repeated fetch loops, heavy client-side work, obvious N+1 queries)

- State stored in memory or singletons, assuming a single server

When you see several of these inside the same feature, refactoring often expands. You fix one bug and discover the “real” logic lives somewhere else.
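The N+1 pattern is easy to confirm by counting queries. This SQLite sketch uses a hypothetical schema and a small counting wrapper: the loop version issues one query for the orders plus one per order, while the join returns the same rows in a single query.

```python
import sqlite3

class CountingConnection:
    """Wraps a sqlite3 connection and counts executed statements."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        self.queries = 0

    def execute(self, sql: str, params: tuple = ()):
        self.queries += 1
        return self.conn.execute(sql, params)

def order_emails_n_plus_one(db: CountingConnection) -> list[tuple]:
    orders = db.execute("SELECT id, user_id FROM orders ORDER BY id").fetchall()
    # One extra query per order: N+1 round trips in total.
    return [
        (order_id, db.execute("SELECT email FROM users WHERE id = ?", (user_id,)).fetchone()[0])
        for order_id, user_id in orders
    ]

def order_emails_joined(db: CountingConnection) -> list[tuple]:
    # One query; the database does the matching.
    return db.execute(
        "SELECT orders.id, users.email FROM orders "
        "JOIN users ON users.id = orders.user_id ORDER BY orders.id"
    ).fetchall()
```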

When a rebuild is the kinder option

A rebuild becomes attractive when there are no boundaries worth preserving. A prototype that “works on my machine” but relies on in-memory sessions, mixes database writes into UI events, and repeats the same fetch across components can be patched, but each patch adds more glue.

A practical rule: if you can’t draw a simple box diagram (UI, API, data layer) and point to where each rule lives, scope is likely bigger than it looks.

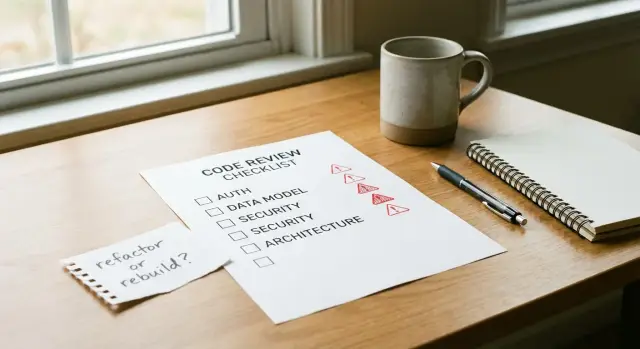

A simple scoring rubric you can apply in 30 to 60 minutes

Use this rubric when you need a fast, defensible way to estimate remediation scope. The goal is to reduce surprise rebuilds.

Step 1: Score four risk areas (1 to 5)

Score each area from 1 (clean, predictable) to 5 (high risk, compounding issues): authentication, data model, security, and architecture.

- 1-2: Mostly works, changes feel local, few surprises

- 3: Works in places but has sharp edges and hidden coupling

- 4-5: Breaks often, fixes create new bugs, hard to reason about

Step 2: Add an “unknowns” score (1 to 5)

Unknowns blow up estimates. Score this area higher the more you run into missing docs, missing tests, flaky repro steps, or a team that can’t explain how core flows work (1 = documented and reproducible, 5 = mostly guesswork).

Step 3: Separate blockers from irritants

Before you total anything, label findings as blockers or irritants.

Blockers stop production use: users can’t log in, data gets corrupted, secrets are exposed.

Irritants are survivable: inconsistent UI, slow pages, messy folder names.

Step 4: Map the total to an action

Add the five scores (auth + data + security + architecture + unknowns) and use a simple rule of thumb:

- 5-9: Quick fix (tight changes, ship soon)

- 10-14: Refactor (improve structure while keeping core)

- 15-19: Partial rebuild (rebuild one subsystem, keep the rest)

- 20-25: Full rebuild (faster than chasing failures)
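The total and the mapping are simple enough to encode, which keeps scoring consistent across reviewers. A minimal sketch: the area keys are hypothetical names, and the thresholds are the rule-of-thumb bands above, not calibrated values.

```python
AREAS = ("auth", "data_model", "security", "architecture", "unknowns")

def recommend(scores: dict[str, int]) -> tuple[int, str]:
    """Sum the five 1-5 scores and map the total to an action band."""
    missing = [area for area in AREAS if area not in scores]
    if missing:
        raise ValueError(f"missing scores: {missing}")
    for area in AREAS:
        if not 1 <= scores[area] <= 5:
            raise ValueError(f"{area} must be 1-5, got {scores[area]}")

    total = sum(scores[area] for area in AREAS)  # always 5..25
    if total <= 9:
        action = "quick fix"
    elif total <= 14:
        action = "refactor"
    elif total <= 19:
        action = "partial rebuild"
    else:
        action = "full rebuild"
    return total, action
```

For example, scores of 3, 3, 3, 3, and 2 total 14, which lands at the top of the refactor band; one more point anywhere tips it into a partial rebuild, which is a good prompt to double-check the borderline areas.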

Step 5: Turn it into a scope statement

Write three short lines: Fix now, Defer, Out of scope.

Example: “Fix now: login flow, secret handling, database constraints. Defer: admin UI polish. Out of scope: new billing features.”

Common traps that make estimates wrong

A clean UI can hide a shaky foundation. AI-generated apps often look finished because screens are easy to generate, but risky parts live behind them: auth, permissions, data rules, and secret handling.

Another mistake is treating “it works locally” as proof the job is small. Local success can depend on cached sessions, a local database with no real data, missing rate limits, or hard-coded keys that won’t survive a real deploy.

Security is a frequent estimate killer. If you plan to “patch security at the end,” the timeline usually slips because security touches authentication, data access, API design, and storage.

It’s also easy to refactor too early. If you reorganize folders or “clean up architecture” before you understand who owns what data and what each role can do, you can make the code prettier while keeping the core rules broken.

A quick gut-check:

- Don’t use UI polish as a quality signal for backend logic

- Don’t treat local runs as production readiness

- Don’t postpone security decisions

- Don’t refactor before the permission model is clear

- Don’t ignore deployment work

Deployment prep is often underestimated: environment variables, build steps, database migrations, basic logging, and simple monitoring. That work can turn a “two-day fix” into a week.

Quick checklist: the fastest signals of scope

When you need a fast estimate, look for signals that predict rework, not just bugs. If you can’t answer one cleanly, treat it as a scope multiplier.

- Create a brand-new user and complete the core workflow end to end (not just a happy-path demo).

- Search for secrets (API keys, database URLs, JWT secrets) in the repo and in client code.

- Confirm authorization is enforced server-side (not just hidden buttons).

- Check whether migrations run cleanly and tables/fields match what the code expects.

- Explain the architecture in five plain sentences: where requests enter, where auth lives, where business rules live, where data is stored, and how deployments happen.

If signup works but API authorization is missing and secrets are exposed, you’re already in “security hardening plus refactor” territory.

Example: deciding refactor vs rebuild for a prototype that keeps breaking

A non-technical founder brings a Lovable prototype that “mostly works,” except login fails randomly and people get logged out. The goal is to estimate scope without spending days reading every file.

A 30-minute pass can answer four questions: can it run, can a user sign up and log in, is any sensitive data exposed, and does the database match what the app thinks it is?

What we check first

Start by running the app locally (or in its current host) and repeating the login flow a few times: sign up, log out, log back in. Then trace auth end to end: frontend form, API route, session or token creation, cookie settings, middleware.
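When you check cookie settings, these are the attributes worth looking for on a session cookie. This sketch assumes the team settles on server-side sessions carried in a cookie (one consistent option among several) and uses Python's standard `http.cookies` module only to show the attributes; `session_cookie_header` is a hypothetical helper.

```python
from http.cookies import SimpleCookie

def session_cookie_header(session_id: str, max_age_seconds: int = 60 * 60 * 24 * 7) -> str:
    """Build a Set-Cookie value for an opaque, server-side session id."""
    cookie = SimpleCookie()
    cookie["session"] = session_id
    morsel = cookie["session"]
    morsel["httponly"] = True   # not readable from JavaScript, unlike localStorage
    morsel["secure"] = True     # only sent over HTTPS
    morsel["samesite"] = "Lax"  # limits cross-site sends (attribute supported since Python 3.8)
    morsel["path"] = "/"
    morsel["max-age"] = max_age_seconds
    return morsel.OutputString()
```

If login state lives partly in a cookie like this and partly in localStorage, that split is often the source of "random" logouts.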

Next, scan for exposed secrets (API keys in the repo, hard-coded JWT secrets, public database URLs) and verify the schema: tables, relations, migrations, and types (especially user, org, permissions).

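Here’s a sample score on a coarser 0-to-3 scale (0 = fine, 3 = severe) across the four risk areas only, which is quicker to apply in a 30-minute pass than the full 1-to-5 rubric: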

| Area | Score (0-3) | Why |
|---|---|---|
| Auth | 3 | Mixed auth patterns (cookies + localStorage), missing refresh flow |
| Data model | 2 | User table exists, but roles are duplicated across tables |
| Security | 3 | Secrets committed, no input validation on key endpoints |
| Architecture | 2 | Business logic spread across UI and API routes |

Total: 10/12. That usually points to rebuilding the foundation (auth + data access) while reusing UI screens and copy.

Immediate fixes (do now): replace auth with one consistent approach, rotate and remove exposed secrets, add basic validation and protect high-risk endpoints, make migrations reproducible, and add minimal logging around login and user creation.

After the app is stable, defer structural cleanup (folder naming, deeper refactors), performance tuning, and nice-to-have admin tools.

The surprise is often hidden coupling: “quick” login bugs caused by schema drift or two different user IDs.

Next steps: turn the rubric into a clear plan

Turn your score into a one-page scope you can share with a teammate, founder, or client. Keep it simple: what is broken, what is risky, and what you will do first.

Include:

- The top 3 risks (broken auth flow, unclear data model, exposed secrets)

- The top 5 fixes (written as outcomes, not tasks)

- Your call: staged refactor or rebuild

- The first milestone you can ship safely

- What you’re not touching yet (to protect the timeline)

If your score is high, plan a rebuild, but keep it small. Define a minimal production-ready core: one happy path, real authentication, a clean database schema, and basic logging. Add features after the foundation is stable.

If your score lands in the middle, choose a staged refactor. Start with security and authentication because they affect everything else and are hard to patch later. Then tackle the data model, and only then do architecture cleanup.

If you inherited an AI-generated app and you’re buried in unknowns, getting an external diagnosis can be the fastest way to stop guessing. FixMyMess (fixmymess.ai) focuses on taking AI-generated prototypes and making them production-ready through codebase diagnosis, logic repair, security hardening, refactoring, and deployment preparation.

FAQ

What’s the fastest way to estimate scope without reading the whole codebase?

Start by answering one thing: can a new user complete the core workflow end to end without crashes or confusing dead ends. Then scan for the scope multipliers—broken auth, schema drift, exposed secrets, and “everything mixed together” code. You’re not trying to fix anything yet; you’re trying to predict whether fixes will stay fixed.

When should I stop and mark the estimate as blocked?

If you can’t run it in a clean environment, you can’t trust any estimate. Missing repo access, undocumented environment variables, unclear database setup, or no idea what “production data” looks like should be treated as blockers. In practice, you pause and label the estimate as blocked until those basics are provided.

How do I decide between repairing the existing app and rebuilding it?

Repairs make sense when problems are mostly local: one broken flow, a few inconsistent checks, or missing validation that can be added in a consistent way. A rebuild is usually faster when core boundaries are missing—auth is inconsistent, permissions aren’t enforced server-side, secrets are required in the browser, and the data model doesn’t match what the app thinks it is. If fixing one bug keeps creating two new ones, you’re already paying “rebuild tax.”

Why do authentication problems expand scope so quickly?

Because auth touches almost every screen and API call. A small symptom—random logouts, reset emails not arriving, UI saying “logged in” while the API rejects requests—often means state is split across multiple places with no single source of truth. That usually turns into a design fix plus retesting many features, not a one-line patch.

What’s the simplest way to tell if authorization is actually safe?

UI-only protection is not protection. If a user can still hit an admin endpoint or fetch private data by calling the API directly, the issue is high severity. The fix is to define permissions clearly, enforce them on the server for every relevant route, and verify data ownership rules so you don’t leak or corrupt user data.

What are the quickest data model red flags to check?

Look for mismatch and ambiguity: code reading fields that don’t exist, tables with unused columns, multiple ways to represent ownership (email in one place, userId in another), or roles duplicated across tables. These create “haunted” bugs where things break in odd ways because the app isn’t sure what the truth is. Fixing it often requires choosing a clean source of truth and updating code and data together.

If I find committed secrets, is it just a quick cleanup?

If you see API keys, database passwords, or JWT secrets in the repo or front-end bundle, assume they’re already leaked. Rotating keys is necessary, but it’s not the whole fix if the design still needs secrets in the browser. Treat this as both a security incident and a scope signal that the architecture may need changes, not just cleanup.

What security issues usually mean I need broader fixes, not patches?

When the app builds queries by string concatenation, accepts unvalidated input, or has raw SQL scattered across multiple endpoints, you can’t safely patch one route and stop. You need one consistent pattern for validation and database access so you don’t miss a similar flaw elsewhere. The more places you see the pattern, the more the estimate should include systemic changes and retesting.

What architecture signals predict refactor pain?

It often shows up as “everything is everywhere”: business rules duplicated across UI and API, database calls inside UI components, multiple ways to do the same thing, and state stored in memory as if there will only ever be one server. In that setup, changes don’t stay local, and refactors balloon because you keep discovering hidden dependencies. If you can’t explain where auth, business rules, and data access live in plain terms, your scope should include boundary cleanup or a partial rebuild.

How should I present a scope estimate so it doesn’t turn into weeks of guessing?

Use a range and make the uncertainty explicit. Write three short buckets: what you’ll fix first to make it safe, what you’ll defer, and what’s out of scope so the timeline doesn’t explode. If you inherited an AI-generated prototype and the unknowns are piling up, FixMyMess can run a free code audit and give a clear repair-versus-rebuild call, with most remediation finished in 48–72 hours after the audit.