OpenAPI-first APIs for messy prototypes: stay in sync

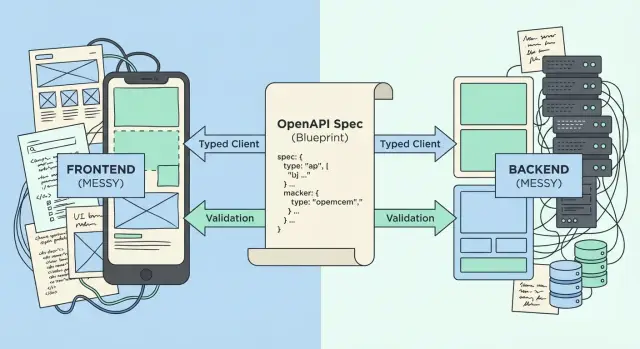

OpenAPI-first APIs help you turn a messy prototype into a reliable contract: generate typed clients, validate requests, and keep frontend and backend aligned.

Why prototypes fall apart when the API is undefined

Most prototypes start with speed: one screen, one endpoint, one quick change. Then the API keeps shifting without a clear record of what it should accept and return. Endpoints get renamed, query params appear and disappear, and response shapes change depending on who last touched the code.

The frontend usually fills the gaps by guessing. Someone hardcodes a field name that worked yesterday. Someone else adds one more optional parameter. The backend quietly starts returning a different error format. Nothing looks broken in isolation, but the system gets fragile fast.

Small mismatches turn into big bugs because they hide until the worst time. A required field flips to optional and checkout breaks. A response changes from userId to id and the UI shows blanks. A boolean becomes a string and validation passes in one place but fails in another. Auth headers differ across endpoints and users get random logouts. Error responses vary, so the UI can’t show the right message.

A “single source of truth” fixes this in day-to-day work: one agreed description of each endpoint (path, method, params, request body, response shape, error format) that both frontend and backend follow. When that source changes, everyone sees it and updates together. That’s the promise behind OpenAPI-first APIs.

You don’t need a full rewrite to get there. Even a messy AI-generated prototype can improve step by step: document the endpoints people actually use, lock down the shapes that matter, then fix code to match.

What “OpenAPI-first” actually means

OpenAPI is a written contract for your API. It’s usually a YAML or JSON file that describes endpoints, inputs, and outputs in a way humans and tools can read. Instead of relying on “it worked yesterday,” you can point to one document and say: this is the truth.

OpenAPI-first means the contract is decided first, then the code follows it.

With “spec last,” teams build endpoints quickly, the frontend guesses shapes, and everyone patches things when they break. Later, someone documents what exists, but the docs lag behind and drift becomes normal.

With “spec first,” you agree on behaviors up front, even if the backend is still messy. The spec becomes the plan: what the frontend should call, what the backend must return, and what happens when things go wrong.

A useful spec makes four things unambiguous:

- Endpoints and methods: paths, HTTP verbs, parameters, and a couple of examples.

- Auth rules: how users authenticate, which headers or tokens are required, and what happens when auth fails.

- Data schemas: exact fields for requests and responses (types, required vs optional, formats).

- Errors: standard error shapes and status codes, so failures are predictable.
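As a rough sketch of how those four items can sit in one document, here is a tiny OpenAPI 3.0 description written as a TypeScript object (the same structure is usually stored as YAML or JSON). The /projects/{projectId} path, the Project and ApiError schemas, and the bearerAuth scheme name are illustrative assumptions, not a real API.

```typescript
// A minimal OpenAPI 3.0 document as a plain object. All names are hypothetical.
const spec = {
  openapi: "3.0.3",
  info: { title: "Prototype API", version: "0.1.0" },
  paths: {
    "/projects/{projectId}": {
      get: {
        // Endpoint and method, with its path parameter.
        parameters: [
          { name: "projectId", in: "path", required: true, schema: { type: "string" } },
        ],
        // Auth rule: this operation requires a bearer token.
        security: [{ bearerAuth: [] }],
        responses: {
          "200": {
            description: "The project",
            content: { "application/json": { schema: { $ref: "#/components/schemas/Project" } } },
          },
          // Errors: every failure uses the same documented shape.
          "401": {
            description: "Missing or invalid token",
            content: { "application/json": { schema: { $ref: "#/components/schemas/ApiError" } } },
          },
        },
      },
    },
  },
  components: {
    securitySchemes: { bearerAuth: { type: "http", scheme: "bearer" } },
    schemas: {
      // Data schema: exact fields, types, and which ones are required.
      Project: {
        type: "object",
        required: ["id", "name"],
        properties: {
          id: { type: "string" },
          name: { type: "string" },
          createdAt: { type: "string", format: "date-time" },
        },
      },
      ApiError: {
        type: "object",
        required: ["code", "message"],
        properties: { code: { type: "string" }, message: { type: "string" } },
      },
    },
  },
};

// List every "METHOD path" pair the document describes.
function listOperations(doc: { paths: Record<string, Record<string, unknown>> }): string[] {
  const operations: string[] = [];
  for (const path of Object.keys(doc.paths)) {
    for (const method of Object.keys(doc.paths[path])) {
      operations.push(`${method.toUpperCase()} ${path}`);
    }
  }
  return operations;
}
```

A helper like listOperations is only a convenience for reviews; the value is in the document itself being the one place where paths, auth, schemas, and errors are written down.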

The practical benefit is alignment. Backend implements to the spec. Frontend can generate types and calls that match. QA tests against it. Product can read it and confirm the API supports real user flows.

This matters even more for AI-built prototypes where endpoints “kind of” exist but aren’t consistent. One route returns { user: ... }, another returns { data: { user: ... } }, and errors might be plain text in one place and JSON in another. Writing one consistent contract forces those decisions into the open and stops the guessing.

How to create your first OpenAPI spec from a messy prototype

Messy prototypes usually have an API, just not a written one. Your first spec should describe what the system does today, then get more consistent with each pass. The goal is progress, not perfection.

Start by collecting evidence from what already exists: backend routes, frontend network calls, server logs, and any notes or screenshots. In AI-generated codebases (from tools like Bolt, v0, Cursor, or Replit), you’ll often find endpoints that work for one screen and break in real use: missing auth checks, odd field names, inconsistent errors. Capture real requests and responses, even if they’re ugly.

Pick one narrow workflow first so you don’t boil the ocean. A good slice is something the business depends on, like “sign up + create a project” or “checkout + payment confirmation.” If you can describe one flow end to end, you’ll have a spec you can use immediately.

Before writing endpoints, set a few simple rules that prevent future chaos. Decide on path style (like /projects/{projectId}), naming style (camelCase or snake_case), one pagination pattern, one shared error shape, and clear auth requirements per endpoint.
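One of those rules, a single pagination pattern, might be sketched like this. The envelope fields (items, total, limit, offset) and the default and maximum limits are assumptions chosen for illustration; the point is only that every list endpoint shares them.

```typescript
// One pagination envelope used by every list endpoint (hypothetical field names).
interface Page<T> {
  items: T[];
  total: number; // total matching items, not just the size of this page
  limit: number;
  offset: number;
}

interface PageQuery {
  limit?: number;
  offset?: number;
}

const DEFAULT_LIMIT = 20; // assumed default
const MAX_LIMIT = 100; // assumed upper bound

// Apply the same limit/offset rules everywhere so clients never special-case a list.
function paginate<T>(all: T[], query: PageQuery): Page<T> {
  const limit = Math.min(Math.max(query.limit ?? DEFAULT_LIMIT, 1), MAX_LIMIT);
  const offset = Math.max(query.offset ?? 0, 0);
  return { items: all.slice(offset, offset + limit), total: all.length, limit, offset };
}
```

Whatever pattern you pick (offset-based here, cursor-based elsewhere), writing it once as a shared schema keeps a second, slightly different envelope from appearing later.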

Now write version 0.1 of the spec, even if it’s incomplete. Document the fields you’re confident about, and use descriptions to flag uncertainty. The biggest win is making request bodies and responses explicit, because that’s where frontend guessing happens.

A practical approach: take one real response from logs, use it to draft a schema, then remove fields you don’t want to guarantee long term.
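That drafting step can be partly mechanical. The following is a minimal sketch, not a real schema-inference tool: a hypothetical draftSchema function that turns one sample value into a JSON-Schema-like draft. It marks every observed key as required and only looks at the first array element, so the output is a starting point to edit by hand.

```typescript
// A JSON-Schema-like shape, limited to what this sketch needs.
type DraftSchema =
  | { type: "string" | "number" | "integer" | "boolean" | "null" }
  | { type: "array"; items: DraftSchema | null }
  | { type: "object"; required: string[]; properties: Record<string, DraftSchema> };

// Infer a first-draft schema from one sample value. Every observed key is
// marked required; relax that by hand once you know what is truly optional.
function draftSchema(sample: unknown): DraftSchema {
  if (sample === null) return { type: "null" };
  if (Array.isArray(sample)) {
    // Only the first element is inspected; mixed arrays need manual review.
    return { type: "array", items: sample.length > 0 ? draftSchema(sample[0]) : null };
  }
  switch (typeof sample) {
    case "string":
      return { type: "string" };
    case "boolean":
      return { type: "boolean" };
    case "number":
      return { type: Number.isInteger(sample) ? "integer" : "number" };
    case "object": {
      const obj = sample as Record<string, unknown>;
      const properties: Record<string, DraftSchema> = {};
      for (const key of Object.keys(obj)) properties[key] = draftSchema(obj[key]);
      return { type: "object", required: Object.keys(obj), properties };
    }
    default:
      throw new Error(`Unsupported value of type ${typeof sample}`);
  }
}

// Example: a response captured from logs (made-up data).
const drafted = draftSchema({ id: "u_1", age: 30, tags: ["a"], deletedAt: null });
```

A field that was null in the sample (deletedAt above) comes out as type "null", which is almost never what you want to guarantee. That is exactly the kind of entry to fix or remove before the draft goes into the spec.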

Finally, do a quick review with the people touching both sides: whoever owns the frontend calls and whoever owns the backend handlers. A short pass often catches mismatched status codes, missing required fields, and naming confusion.

Generate typed clients so the frontend stops guessing

A typed client is a small library generated from your OpenAPI spec that already knows your endpoints, parameters, and response shapes. Instead of the frontend guessing what the backend returns (and getting surprised in production), your editor can warn you as soon as you use the API the wrong way.

In practice, this replaces hand-written fetch calls with a repeatable flow: generate the client from the spec, import the generated functions and types, and update the UI to use them. Then fix type errors immediately instead of debugging 400s later.

Typing catches common problems early: missing required fields, wrong enum values, and mismatched names (snake_case vs camelCase). It also helps with tricky data like dates. If the API says a field is a date-time string, you can handle it consistently instead of converting it differently across the UI.
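To make that concrete, here is a small sketch of the kind of types a generator might emit, plus one conversion point for dates. The types are hand-written stand-ins, and UserDto, User, Plan, and toUser are hypothetical names rather than the output of any specific generator.

```typescript
// Types like these would normally be generated from the spec; they are
// hand-written here, and every name is a hypothetical stand-in.
type Plan = "free" | "pro";

interface UserDto {
  id: string;
  name: string;
  plan: Plan;
  createdAt: string; // spec: type string, format date-time
}

interface User {
  id: string;
  name: string;
  plan: Plan;
  createdAt: Date;
}

// Convert the wire format to the UI model in exactly one place.
function toUser(dto: UserDto): User {
  const createdAt = new Date(dto.createdAt);
  if (isNaN(createdAt.getTime())) {
    throw new Error(`Invalid date-time for createdAt: ${dto.createdAt}`);
  }
  return { id: dto.id, name: dto.name, plan: dto.plan, createdAt };
}

const user = toUser({ id: "u_1", name: "Ada", plan: "pro", createdAt: "2024-05-01T12:00:00Z" });

// With these types, mistakes like the following fail at compile time:
//   toUser({ id: "u_1", name: "Ada", plan: "premium", createdAt: "..." }); // "premium" is not a Plan
//   user.displayName;                                                     // no such property on User
```

Keeping the string-to-Date conversion in one function means every screen sees the same value, instead of each component parsing the string its own way.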

Types aren’t enough on their own. Real apps still need runtime behavior: timeouts so the UI doesn’t hang, retries for flaky networks, and auth token injection so every request is signed the same way. A solid pattern is to configure one client instance with these rules, then use it everywhere.
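A minimal sketch of that single configured client follows. It assumes an injected transport function so it can run without a network (in a browser the transport would wrap fetch()); createClient, ClientOptions, and the retry policy are illustrative choices, not a particular library's API.

```typescript
// Minimal request/response shapes so the sketch runs without a real network.
interface ApiRequest {
  method: string;
  path: string;
  headers: Record<string, string>;
  body?: unknown;
}
interface ApiResponse {
  status: number;
  body: unknown;
}
// The transport is injected; in a browser it would wrap fetch().
type Transport = (req: ApiRequest) => Promise<ApiResponse>;

interface ClientOptions {
  transport: Transport;
  getToken: () => string | null;
  timeoutMs: number;
  maxRetries: number;
}

// Reject if the work does not settle within ms milliseconds.
function withTimeout<T>(work: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => reject(new Error(`Timed out after ${ms} ms`)), ms);
    work.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (err) => { clearTimeout(timer); reject(err); },
    );
  });
}

// Build one request function that applies auth, timeout, and retries uniformly.
function createClient(opts: ClientOptions) {
  return async function request(method: string, path: string, body?: unknown): Promise<ApiResponse> {
    const token = opts.getToken();
    const headers: Record<string, string> = { "Content-Type": "application/json" };
    if (token) headers["Authorization"] = `Bearer ${token}`;

    let lastError: unknown;
    for (let attempt = 0; attempt <= opts.maxRetries; attempt++) {
      try {
        const res = await withTimeout(opts.transport({ method, path, headers, body }), opts.timeoutMs);
        // Retry only on 5xx; a 4xx means the request itself is wrong.
        if (res.status < 500 || attempt === opts.maxRetries) return res;
      } catch (err) {
        lastError = err; // network failure or timeout: try again if attempts remain
      }
    }
    throw lastError;
  };
}
```

This sketch retries immediately and retries every method. A production client would likely add backoff between attempts and avoid retrying requests that are not safe to repeat, such as a payment POST.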

One rule should be non-negotiable: client generation is a build step, not a one-off. If the spec changes, the client must regenerate in the same commit, or drift returns.

Validate requests and responses to catch drift early

Typed code helps you write the right shapes, but it can’t protect your API when real traffic hits it. Type checks happen at build time. Runtime validation happens when the server receives a request or sends a response. Without it, clients can send missing fields, wrong formats, or extra data and the backend might accept it by accident.

With OpenAPI-first APIs, the spec is the contract. Runtime validation is how you enforce that contract every day, even when code changes quickly.

Validate incoming requests (and fail fast)

Start by checking basics on every endpoint. You want clear errors instead of silent coercion: required fields, formats (emails, UUIDs, dates, URLs), allowed values (enums), and how you handle unexpected fields (reject or strip). Put sane limits on payload size for safety.

A common messy-prototype bug: the frontend sends userId as a number in one place and a string in another. Types might not catch it if parts of the app were generated or edited separately. Runtime validation catches it on the first bad request and tells you exactly what failed.
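The checks above can be sketched with a small hand-rolled validator. In practice you would more likely use a validation library driven by the OpenAPI document; this version only illustrates the behavior. FieldRule, validateBody, and the comment endpoint's fields are hypothetical.

```typescript
type FieldRule =
  | { type: "string"; required?: boolean; enum?: string[]; pattern?: RegExp }
  | { type: "number"; required?: boolean }
  | { type: "boolean"; required?: boolean };

type BodyRules = Record<string, FieldRule>;

interface FieldError {
  field: string;
  problem: string;
}

// Check a parsed JSON body against the rules. No coercion: "42" is not a number.
function validateBody(body: unknown, rules: BodyRules): FieldError[] {
  if (typeof body !== "object" || body === null || Array.isArray(body)) {
    return [{ field: "(body)", problem: "must be a JSON object" }];
  }
  const data = body as Record<string, unknown>;
  const errors: FieldError[] = [];

  for (const field of Object.keys(rules)) {
    const rule = rules[field];
    const value = data[field];
    if (value === undefined) {
      if (rule.required) errors.push({ field, problem: "is required" });
      continue;
    }
    if (typeof value !== rule.type) {
      errors.push({ field, problem: `must be a ${rule.type}, got ${typeof value}` });
      continue;
    }
    if (rule.type === "string" && typeof value === "string") {
      if (rule.enum && rule.enum.indexOf(value) === -1) {
        errors.push({ field, problem: `must be one of: ${rule.enum.join(", ")}` });
      }
      if (rule.pattern && !rule.pattern.test(value)) {
        errors.push({ field, problem: "has an invalid format" });
      }
    }
  }
  // Reject unexpected fields instead of silently ignoring them.
  for (const field of Object.keys(data)) {
    if (!(field in rules)) errors.push({ field, problem: "is not allowed" });
  }
  return errors;
}

// Rules for a hypothetical "create comment" endpoint.
const createCommentRules: BodyRules = {
  userId: { type: "string", required: true },
  text: { type: "string", required: true },
  visibility: { type: "string", enum: ["public", "private"] },
};
```

Calling validateBody({ userId: 42, text: "hi" }, createCommentRules) reports that userId must be a string and got a number, which is the mismatch described above surfacing on the first bad request.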

Validate outgoing responses (so the backend can’t drift)

Response validation is where drift hides. A backend refactor changes totalCount to total, or starts returning null where the spec says string. The UI breaks in ways that feel random.

Validating responses forces the server to stay honest: if it returns something outside the spec, you see it immediately in logs and tests.
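A sketch of that idea, assuming a flat response shape and a reporting callback you supply (for example, one that logs in production and throws in tests). ResponseShape, findDrift, and checked are hypothetical names.

```typescript
type Primitive = "string" | "number" | "boolean";

interface ResponseField {
  type: Primitive;
  nullable?: boolean;
}
type ResponseShape = Record<string, ResponseField>;

// Return a list of ways the body departs from the documented shape.
function findDrift(body: Record<string, unknown>, shape: ResponseShape): string[] {
  const drift: string[] = [];
  for (const name of Object.keys(shape)) {
    const field = shape[name];
    if (!(name in body)) {
      drift.push(`missing field "${name}"`);
    } else if (body[name] === null) {
      if (!field.nullable) drift.push(`"${name}" is null but the spec says ${field.type}`);
    } else if (typeof body[name] !== field.type) {
      drift.push(`"${name}" is ${typeof body[name]} but the spec says ${field.type}`);
    }
  }
  for (const name of Object.keys(body)) {
    if (!(name in shape)) drift.push(`undocumented field "${name}"`);
  }
  return drift;
}

// Wrap a handler so drift is reported on every response it produces.
function checked<A>(
  shape: ResponseShape,
  handler: (arg: A) => Record<string, unknown>,
  report: (problems: string[]) => void,
): (arg: A) => Record<string, unknown> {
  return (arg) => {
    const body = handler(arg);
    const problems = findDrift(body, shape);
    if (problems.length > 0) report(problems);
    return body;
  };
}

// A refactored handler that renamed totalCount to total (made-up example).
const driftLog: string[] = [];
const listItems = checked(
  { totalCount: { type: "number" }, nextCursor: { type: "string", nullable: true } },
  (_page: number) => ({ total: 3, nextCursor: null }),
  (problems) => { for (const p of problems) driftLog.push(p); },
);
listItems(1);
```

After the call, driftLog names both the missing totalCount and the undocumented total, so the rename shows up in logs or a failing test rather than as a blank in the UI.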

Also standardize error responses so the UI can handle failures without special cases. Keep one shape everywhere: a stable code (machine-friendly), a short message (human-friendly), optional details (field errors), and a request traceId for support.
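In TypeScript that one shape might look like the sketch below. The field names follow the list above; the specific code values (UNAUTHENTICATED, VALIDATION_FAILED), the details type, and the helper names are assumptions for illustration.

```typescript
interface ApiError {
  code: string; // stable, machine-friendly
  message: string; // short, human-friendly
  details?: Record<string, string>; // optional field-level errors
  traceId: string; // lets support find the request in logs
}

// Runtime guard: does an unknown response body have the agreed error shape?
function isApiError(value: unknown): value is ApiError {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return (
    typeof v["code"] === "string" &&
    typeof v["message"] === "string" &&
    typeof v["traceId"] === "string"
  );
}

// UI-side mapping: branch on the stable code, never on message text.
function userMessage(body: unknown): string {
  if (!isApiError(body)) return "Something went wrong. Please try again.";
  switch (body.code) {
    case "UNAUTHENTICATED":
      return "Please log in again.";
    case "VALIDATION_FAILED":
      return "Please check the highlighted fields.";
    default:
      return `${body.message} (ref: ${body.traceId})`;
  }
}
```

Because the UI switches on code, the backend can reword message freely without breaking any screen.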

Keep frontend and backend aligned during changes

When an API changes, the biggest risk isn’t the change itself. It’s the quiet mismatch: the frontend keeps sending the old shape, the backend starts expecting the new one, and the bug only shows up in production.

Treat the spec like the handshake between teams. If the handshake changes, everyone updates their grip before moving on.

A simple workflow prevents drift:

- Update the OpenAPI spec first (request, response, errors).

- Regenerate the typed client and types.

- Implement the backend change to match the spec.

- Update the frontend using the regenerated types.

- Run validation and tests before merging.

That order forces disagreements to show up early, when the change is still small.

Breaking changes will happen. The problem is breaking changes with no warning. If you need to rename a field, change a required parameter, or alter behavior, consider shipping a new version and keeping the old one alive briefly so the frontend has a safe window to migrate. For endpoints and fields that are going away, mark them deprecated in the spec and track a real removal date. Otherwise, deprecations tend to linger forever.
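Tracking removal dates can be automated with a small check over the spec. The sketch below relies on the standard deprecated flag on operations; the x-removal-date extension field is an assumption invented for this example (OpenAPI permits x- prefixed extensions, but this one is not standard). It also assumes each path item contains only HTTP method keys.

```typescript
interface SpecOperation {
  deprecated?: boolean; // standard OpenAPI operation field
  "x-removal-date"?: string; // hypothetical extension: ISO date such as "2025-01-31"
}
type SpecPaths = Record<string, Record<string, SpecOperation>>;

interface DeprecationIssue {
  operation: string;
  problem: string;
}

// Flag deprecated operations that have no removal date or are overdue.
// Dates are compared as YYYY-MM-DD strings, which sort chronologically.
function auditDeprecations(paths: SpecPaths, today: string): DeprecationIssue[] {
  const issues: DeprecationIssue[] = [];
  for (const path of Object.keys(paths)) {
    for (const method of Object.keys(paths[path])) {
      const op = paths[path][method];
      if (!op.deprecated) continue;
      const operation = `${method.toUpperCase()} ${path}`;
      const removal = op["x-removal-date"];
      if (!removal) {
        issues.push({ operation, problem: "deprecated without a removal date" });
      } else if (removal < today) {
        issues.push({ operation, problem: `removal date ${removal} has passed` });
      }
    }
  }
  return issues;
}
```

Run as part of the test suite, a check like this turns a forgotten deprecation into a failing build instead of a permanent fixture.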

A short “done” checklist helps avoid half-finished changes:

- Spec updated (including error cases).

- Typed client regenerated and committed.

- Backward compatibility checked (or version bumped).

- Deprecated items marked and tracked.

- QA tests updated from the spec.

Common mistakes that make OpenAPI-first fail

OpenAPI-first APIs work when the spec is treated like a real product artifact, not a file that sits in a repo. Most failures happen when teams adopt the tooling (generators, docs, mocks) but avoid the hard part: agreeing on a clear contract and enforcing it.

One common trap is mixing styles. You start with neat paths, then add one endpoint that accepts a loose blob of JSON because the prototype needs it today. A week later, half the API is clean and the other half is ad hoc, so typed clients either break or end up full of `any`.

Another issue is skipping error cases. If the spec only describes happy paths, the UI has no reliable way to handle failures. You end up with brittle logic like “if message includes…” and a pile of one-off UI states.

Auth is also often left vague. Prototypes commonly have a hardcoded bypass, a token pasted into local storage, or a half-finished OAuth flow. If the spec doesn’t spell out security schemes and required headers early, frontend and backend build different assumptions and debugging turns into guesswork.

Client generation can become a one-time event, too. Teams generate a typed client once, then keep changing the backend without regenerating. The client stops matching reality, and developers start working around type errors instead of fixing the contract.

Finally, many teams treat the spec as documentation only. If servers don’t validate requests and responses against it (even in dev or staging), the spec becomes a wish list. The real contract becomes whatever the backend returns today.

A simple smell test: if a frontend dev says “I’ll just try it and see what comes back,” you’re not using the spec as a contract.

Quick checks before you call the API “stable”

“Stable” doesn’t mean “perfect.” It means people can build on it without guessing, and changes are deliberate instead of accidental.

Start with a basic question: is there exactly one spec that everyone treats as the truth? If the backend has one file, the frontend has another, and a contractor has a third copy in a chat thread, you don’t have a stable API. You have three opinions.

Next, check coverage. A spec can be well written and still useless if it skips the core journey. Pick one main flow (sign up, log in, create the main object, list it, update it) and confirm every call in that flow is documented.

These checks catch most “it worked on my machine” problems:

- One shared OpenAPI spec file is used for code generation and review, not copied around.

- Schemas clearly mark what is required, what can be null, and what can be omitted.

- Errors are predictable: same shape, same naming, and status codes that match reality.

- You can regenerate typed clients and run request/response validation in one repeatable command.

- The spec matches production behavior for the top endpoints, not just local tests.

Watch out for “optional” becoming a junk drawer. If a field is truly optional, say what happens when it’s missing. If it’s required for the screen to work, mark it required.
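The difference between required, nullable, and omittable is easy to blur, so here is a short sketch of how the three cases look in client types. Profile and its fields are hypothetical. In OpenAPI 3.0 the second case is usually expressed with nullable: true, and the third by leaving the field out of the required list.

```typescript
interface Profile {
  id: string; // required, never null
  bio: string | null; // required key, but the value may be null ("no bio yet")
  nickname?: string; // may be left out of the response entirely
}

type FieldState = "present" | "null" | "omitted";

// Report which of the three states a field is in for a given response.
function fieldState(profile: Profile, key: keyof Profile): FieldState {
  const value = profile[key];
  if (value === undefined) return "omitted";
  if (value === null) return "null";
  return "present";
}

const sampleProfile: Profile = { id: "u_1", bio: null };
```

For sampleProfile, id is present, bio is null, and nickname is omitted. A screen that needs nickname to render should not be relying on a field in the third category.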

One example that shows up in prototypes: returning 200 with { "error": "Not logged in" } when auth fails. It feels convenient, but it undermines typed clients, because a generated client treats every 200 as the documented success shape, and it pushes error handling into random frontend checks. A stable API returns a clear 401 with a consistent error object.
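A small sketch shows why the status code matters to client code. Result and toResult are hypothetical helpers, and the error fields are assumed; the logic simply trusts the status line the way a typical generated client would.

```typescript
interface ApiError {
  code: string;
  message: string;
}

type Result<T> =
  | { ok: true; data: T }
  | { ok: false; status: number; error: ApiError };

// Classify a response by status code alone.
function toResult<T>(status: number, body: unknown): Result<T> {
  if (status >= 200 && status < 300) return { ok: true, data: body as T };
  const e = body as Partial<ApiError> | null;
  return {
    ok: false,
    status,
    error: {
      code: typeof e?.code === "string" ? e.code : "UNKNOWN",
      message: typeof e?.message === "string" ? e.message : "Request failed",
    },
  };
}

// A proper 401 lands in the error branch, where the UI expects it.
const denied = toResult<{ id: string }>(401, { code: "UNAUTHENTICATED", message: "Not logged in" });

// A 200 carrying an error body is classified as success, so the UI
// receives "data" that has no id at all.
const disguised = toResult<{ id: string }>(200, { error: "Not logged in" });
```

Here denied.ok is false while disguised.ok is true. The second case compiles cleanly and then fails at runtime, which is the guessing the spec was meant to remove.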

A realistic example: cleaning up an AI-built prototype API

Picture a prototype generated by an AI tool: it has a login page, a profile screen, and a payments screen. It looks fine in a demo, but the moment you try real users, the cracks show.

The biggest issue is mismatch. The frontend expects fields the backend never sends, because both sides were built by copying guesses. The profile UI renders displayName and plan, but the backend returns name and never returns subscription data. Meanwhile, the payments screen sends { amount: "20", currency: "usd" }, but the server only accepts an integer amountCents and rejects lowercase currency codes.

OpenAPI-first APIs fix this by making one clear contract, then making both sides obey it.

Start small: define just the endpoints the app uses today, and include both success and error responses so the UI can handle failures without guessing. Even a tiny spec forces important decisions: Is plan required? Is it free | pro? What does “not logged in” look like in JSON?

Once the spec exists, generate a typed client and replace handwritten fetch() calls. If the UI tries to read displayName but the spec says name, it fails at build time instead of failing for users.

Then add request validation on the backend. When the payments screen sends { amount: "20" } instead of { amountCents: 2000 }, the server rejects it immediately with a readable 400 error, not a vague crash later.
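For the payments example, the server-side check might be sketched as follows. The rules mirror the ones described above (integer amountCents, uppercase currency code); CreatePaymentBody, validatePayment, the positive-amount rule, and the VALIDATION_FAILED code are assumptions made for the example.

```typescript
interface CreatePaymentBody {
  amountCents: number;
  currency: string;
}

interface ApiError {
  code: string;
  message: string;
  details: Record<string, string>;
}

type Outcome =
  | { status: 200; body: CreatePaymentBody }
  | { status: 400; body: ApiError };

// Validate a parsed JSON body for a hypothetical "create payment" endpoint.
function validatePayment(input: unknown): Outcome {
  const data = (typeof input === "object" && input !== null ? input : {}) as Record<string, unknown>;
  const details: Record<string, string> = {};
  const amountCents = data["amountCents"];
  const currency = data["currency"];

  if (typeof amountCents !== "number" || !Number.isInteger(amountCents) || amountCents <= 0) {
    details["amountCents"] = "must be a positive integer number of cents";
  }
  if (typeof currency !== "string" || !/^[A-Z]{3}$/.test(currency)) {
    details["currency"] = "must be a three-letter uppercase code such as USD";
  }
  // Name the legacy field explicitly so the fix is obvious to the caller.
  if ("amount" in data) details["amount"] = "is not accepted; send amountCents instead";

  if (Object.keys(details).length > 0) {
    return {
      status: 400,
      body: { code: "VALIDATION_FAILED", message: "Invalid payment request", details },
    };
  }
  return { status: 200, body: { amountCents: amountCents as number, currency: currency as string } };
}
```

Sending { amount: "20", currency: "usd" } through validatePayment yields a 400 whose details name all three problems at once, so the frontend fix is a single pass instead of three rounds of trial and error.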

Next steps: turn the spec into a calmer development process

You don’t need to rewrite your whole system to get value from OpenAPI-first APIs. The fastest win is to pick one workflow users hit every day and make it predictable end to end.

Choose a single, high-traffic flow and ship a spec for it this week. Good candidates are login, checkout, or “create item” plus “list items.” Keep the first version small but complete: clear request bodies, clear responses, and real error cases.

Then use the spec to keep drift from sneaking back in: regenerate the typed client for that slice, validate requests and responses for the same endpoints, and make “no API change without a spec change” a habit.

If the codebase is already tangled (broken auth, exposed secrets, spaghetti endpoints, security holes), a fast audit helps you avoid standardizing a bad contract. FixMyMess (fixmymess.ai) focuses on repairing AI-generated apps and can help turn a drifting prototype into a production-ready API by locking down the contract, fixing the code to match, and hardening the riskiest areas.

FAQ

What does “OpenAPI-first” mean in plain terms?

An OpenAPI-first approach means you agree on an API contract (endpoints, inputs, outputs, and errors) before you rely on the code’s current behavior. The backend and frontend both treat the spec as the truth, so changes are deliberate and visible instead of accidental drift.

Why do AI-generated prototypes fall apart when the API isn’t defined?

Prototypes break when the frontend starts guessing response shapes and the backend changes behavior without a shared record. Small mismatches like renamed fields, inconsistent auth headers, or different error formats compound until core flows fail in production.

How do I start an OpenAPI spec when my API is already messy?

Start with one workflow users actually rely on, like “sign up + create project” or “checkout + confirmation.” Capture real requests and responses from network logs, write a minimal spec for just those endpoints, then tighten schemas and error formats as you fix the code.

What should I include in version 0.1 of the spec?

A useful first version makes the request body, response shape, auth requirements, and error shape explicit for the endpoints you’re using today. You can leave unknowns noted in descriptions, but avoid “anything goes” schemas that force the frontend back into guessing.

How do I handle field name mismatches like `userId` vs `id`?

Pick one naming convention and stick to it everywhere, then update either the backend or the client to match. If you can’t change backend outputs immediately, reflect reality in the spec for now and plan a controlled migration so you don’t break the UI silently.

What is a typed client, and why is it worth generating?

A typed client is generated code that knows your endpoints and data types from the spec, so your editor and build can catch mismatches early. It reduces hand-written fetch() guesswork and makes API changes show up as type errors instead of production bugs.

Do I still need runtime validation if I have TypeScript types?

Yes. Types help at build time, but they don’t protect your server from real traffic sending bad payloads. Runtime validation checks incoming requests and outgoing responses against the spec so drift and invalid data get caught immediately with clear errors.

What’s the simplest way to standardize API errors?

Use one consistent error object across endpoints, with a stable machine-readable code, a short message, and optional field-level details. That consistency lets the UI show the right message without fragile parsing like checking whether text “includes” something.

What’s a safe workflow for changing an API without breaking the frontend?

Update the spec first, regenerate the typed client in the same change, then implement backend updates to match and fix the frontend using the regenerated types. If you must make a breaking change, version it or provide a short migration window so you don’t surprise production users.

When should I get help fixing an AI-generated API instead of patching it myself?

If your prototype has broken auth, exposed secrets, inconsistent endpoints, or unclear data shapes, standardizing a spec can lock in bad behavior. FixMyMess can run a free code audit and then quickly repair AI-generated code, align it to a clear OpenAPI contract, and harden the risky parts so the app is ready for production.