Webhook replay protection: stop duplicates with confidence

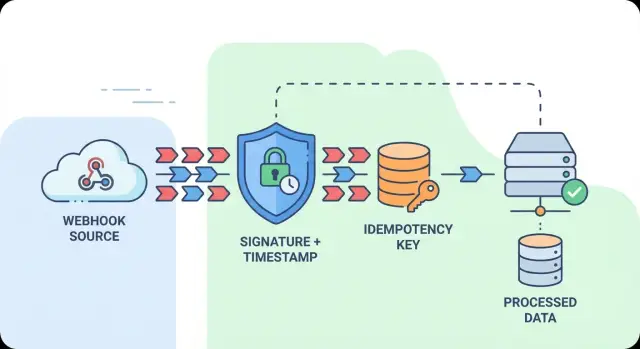

Webhook replay protection stops duplicate charges and actions by verifying signatures, enforcing timestamp windows, and storing idempotency keys safely.

Why duplicate webhooks happen and why it matters

A webhook is a message a service sends to your app (usually an HTTP request) to tell you an event happened, like a payment succeeded or a subscription was canceled.

The catch is that “sent” doesn’t mean “processed once.” Most providers deliver webhooks with an “at least once” guarantee. If your endpoint times out, returns a 5xx, or the response gets lost on the way back, the provider retries. Those retries are accidental duplicates: the same real-world event delivered more than once.

Replays are different. A replay attack is when someone captures a valid webhook request and sends it again later to trigger the same effect twice. The request can look legitimate, so without replay protection you can accept an old event as if it were new.

If duplicates or replays get treated as fresh events, the results are painfully real: double charges or refunds, duplicate emails, inventory decremented twice, duplicate subscriptions or invoices, and analytics that no longer match what actually happened.

The goal is simple to say and easy to get wrong: accept each valid event once, and ignore repeats safely. “Safely” matters because legitimate retries are normal, while tampered requests and stale replays should be rejected.

A good handler treats every incoming webhook as untrusted until proven otherwise. It verifies who sent it, checks that it’s fresh enough to be believable, and records a stable “already processed” marker so the second delivery becomes a no-op.

Common sources of duplicates and replays

Duplicate deliveries are expected behavior. Even when everything is working, the same event can arrive again.

The most common cause is retries after a timeout or a 5xx response. You may have finished processing, but the provider didn’t get a clear success signal. Network glitches create the same outcome: the request succeeds, but the response is dropped or a proxy resets the connection.

Duplicates can also come from your own system and still look like “webhook problems” when you review outcomes. A user double-clicks a button, an automation loops, or a queue redelivers after a worker crash.

Typical sources include provider retries, manual resend actions in dashboards, response loss after successful processing, queue redelivery, and integration loops.

Replays are the more worrying cousin. An attacker (or a buggy client) can resend an old request that was valid before. Without replay protection, that can repeat actions like granting access, changing account state, or generating invoices.

A simple failure case: your handler creates an invoice on payment_succeeded. If the event is retried three times, or replayed a day later, you can end up with multiple invoices unless you verify signatures, enforce a timestamp window, and dedupe by an idempotency key.

Signature validation: your first line of defense

Signature validation blocks most spoofed webhooks before they touch your system. A valid signature proves the payload wasn’t modified in transit (integrity) and that it was produced by someone who knows the shared secret (authenticity).

This is an important part of replay protection because it stops random third parties from inventing events. It does not, by itself, stop a legitimate sender from delivering the same event twice. It just ensures you only handle real messages.

Where teams get burned is trusting the presence of a header instead of verifying it. A request with X-Signature: abc123... means nothing unless you recompute the signature on your side using the raw request body and your secret.

A solid verification flow is:

- Reject immediately if the signature header is missing.

- Read the raw body bytes (not a parsed JSON object).

- Compute the expected HMAC (or whatever the provider specifies) over the exact bytes.

- Compare using a constant-time function.

- Only then parse JSON and do any database work.

Constant-time comparison is worth doing because normal string comparisons can leak information through timing differences.

Also, fail fast. If you validate the signature after parsing large payloads, you hand attackers an easy way to burn CPU. Treat missing or invalid signatures like a locked door: stop early, log minimal details, and return an error without doing extra work.
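The verification flow above can be sketched in a few lines of Python. This is a minimal sketch, not any specific provider's scheme: it assumes the signature is a hex-encoded HMAC-SHA256 of the raw body, and the function name verify_signature is hypothetical. Check your provider's documentation for the actual algorithm, encoding, and header name.

```python
import hashlib
import hmac
from typing import Optional


def verify_signature(raw_body: bytes, signature_header: Optional[str], secret: bytes) -> bool:
    """Return True only if the header matches an HMAC-SHA256 of the raw bytes.

    Assumption: the provider sends a hex digest of HMAC-SHA256(secret, body).
    """
    if not signature_header:
        return False  # missing header: reject before doing any other work
    expected = hmac.new(secret, raw_body, hashlib.sha256).hexdigest()
    # compare_digest takes the same time wherever the two values differ
    return hmac.compare_digest(expected.encode(), signature_header.encode())
```

Call it with the bytes exactly as they arrived on the wire, and parse JSON only after it returns True.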

Timestamp windows: make replays expire

A timestamp window limits how long a captured webhook request stays useful. It works best when the sender includes a timestamp and signs it along with the body.

The flow is straightforward: verify the signature, then check that the timestamp is recent enough. If someone resends the exact same request hours later, it fails the freshness check.

How to choose the window

Many teams start with a small allowed skew, like 5 minutes. That’s usually enough for normal network delay and queueing without letting old messages through.

A practical approach:

- Extract the timestamp from the provider’s header (or payload if that’s their format).

- Verify the signature using the same timestamp value as part of the signed input.

- Compare it to your server time and accept only within the skew window.

- Outside the window, treat it as a replay even if the signature is valid.
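As a rough sketch of those steps, the function below checks a signed timestamp against a 5-minute window. The signed input format (timestamp, a dot, then the raw body), the hex HMAC-SHA256 encoding, and the name is_fresh_and_authentic are all assumptions for illustration; providers differ, so follow their documented format.

```python
import hashlib
import hmac
import time
from typing import Optional

TOLERANCE_SECONDS = 300  # 5-minute skew window


def is_fresh_and_authentic(raw_body: bytes, timestamp_header: str, signature_header: str,
                           secret: bytes, now: Optional[float] = None) -> bool:
    """Verify the signature over timestamp + body, then enforce the window."""
    if not timestamp_header or not signature_header:
        return False
    try:
        sent_at = int(timestamp_header)  # assumed: Unix seconds as a string
    except ValueError:
        return False
    # The timestamp is part of the signed input, so changing it breaks the signature.
    signed_input = timestamp_header.encode() + b"." + raw_body
    expected = hmac.new(secret, signed_input, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected.encode(), signature_header.encode()):
        return False
    current = time.time() if now is None else now  # server clock, injectable for tests
    return abs(current - sent_at) <= TOLERANCE_SECONDS
```

The optional now parameter exists only so the check can be tested without waiting for the window to expire.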

Clock drift is the quiet failure mode. If your server time is off, you’ll reject good events. Keep hosts time-synced, and compare the signed timestamp against your own server clock rather than any other time value supplied in the request.

For late events, decide ahead of time whether you hard reject them or route them to manual review. If your provider occasionally delivers delayed webhooks, logging and reviewing can be safer than processing them automatically outside your window.

Idempotency keys: how dedupe works in practice

An idempotency key is a “do this once” label for a webhook event. When the same event arrives again, you look up the key and return the same outcome instead of running your business logic twice.

The key must be stable across retries. If the provider gives you an event_id or message_id, use that. If not, build one from fields that won’t change between deliveries, such as a hash of provider name + event type + resource id + provider timestamp. Don’t use your own receive time or random UUIDs, because duplicates will never match.

Scope matters too. If you support multiple customers, include the tenant identifier in the stored key so one customer can’t block another by accident.
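One way to build such a key is sketched below. The function name and parameters are hypothetical. It prefers the provider's event ID, falls back to the stable fields mentioned above, and always includes the tenant. Hashing a JSON list is just one way to avoid ambiguity between fields; a plain delimited string also works if the delimiter cannot appear in the values.

```python
import hashlib
import json
from typing import Optional


def idempotency_key(tenant_id: str, provider: str, event_id: Optional[str] = None, *,
                    event_type: str = "", resource_id: str = "",
                    provider_timestamp: str = "") -> str:
    """Build a key that is identical for every delivery of the same event."""
    if event_id:
        parts = ["id", tenant_id, provider, event_id]
    else:
        # Fallback: only fields the provider sends unchanged on every retry.
        parts = ["derived", tenant_id, provider, event_type, resource_id, provider_timestamp]
    canonical = json.dumps(parts, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Nothing here depends on receive time or randomness, so a retry of the same event always maps to the same key.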

A simple model is to store one row per idempotency key with a status and (optionally) a compact outcome:

- in-progress (accepted, work not finished)

- processed (completed, duplicates can return success immediately)

- failed (finished with an error, you may choose to retry)

Retain keys for a period that matches your risk, and at least as long as the provider keeps retrying. If money moves, keep them longer (days or weeks). If impact is low, shorter retention may be fine.

One practical safeguard: enforce uniqueness at the database level. A unique constraint turns concurrency into “first writer wins,” even if two copies arrive at the same time.

Idempotent storage: the simplest reliable pattern

If you want dedupe that holds up under retries, timeouts, and parallel requests, store idempotency in your database. Memory caches expire. In-process locks break when you scale. A database unique constraint is boring, fast, and hard to bypass.

Pick a stable idempotency key (often the provider’s event ID, or a hash of tenant + event ID). Create a table like webhook_receipts with a UNIQUE constraint on that key.

Insert first, then process

The safest flow is to write a receipt before doing real work. Two requests can’t both “win.” One insert succeeds, the other fails, and the duplicate becomes a no-op.

A reliable pattern:

- Validate signature and timestamp, then compute the idempotency key.

- Try to insert a receipt row with status received.

- If the insert fails due to the unique constraint, treat it as a duplicate and return a safe 2xx.

- If the insert succeeds, run business logic, then update the receipt to processed (or failed).

Returning 2xx on duplicates sounds odd, but it’s usually correct. The sender is asking “Did you get it?” and you did. Re-processing is the risky part.
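Here is a minimal sketch of the insert-first pattern using SQLite from Python's standard library. The column set and the helper names open_receipts and handle_once are assumptions for illustration; the same idea carries over to any database that enforces unique constraints.

```python
import sqlite3
from typing import Callable


def open_receipts(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS webhook_receipts (
               idempotency_key TEXT NOT NULL UNIQUE,
               status          TEXT NOT NULL,
               received_at     TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP,
               processed_at    TEXT
           )"""
    )
    return conn


def handle_once(conn: sqlite3.Connection, key: str, work: Callable[[], None]) -> str:
    """Insert the receipt first; run `work` only if this call won the insert."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO webhook_receipts (idempotency_key, status) VALUES (?, 'received')",
                (key,),
            )
    except sqlite3.IntegrityError:
        return "duplicate"  # the caller should answer with a 2xx and stop
    try:
        work()
        status = "processed"
    except Exception:
        status = "failed"
    with conn:
        conn.execute(
            "UPDATE webhook_receipts SET status = ?, processed_at = CURRENT_TIMESTAMP "
            "WHERE idempotency_key = ?",
            (status, key),
        )
    return status
```

The return value tells the HTTP layer what happened; in this sketch a failed receipt is simply recorded, and whether to retry it is left to the caller.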

Store a minimal receipt

Keep the receipt small but useful: idempotency_key, tenant_id, event_type, received_at, processed_at, status, and maybe a short result field like “created invoice 123.” This also gives you an audit trail when you need to explain why something happened.

Step-by-step: build a safe webhook handler

Reliability and replay protection are the same job: accept an event once, and only once, even if it’s delivered many times.

A request flow that holds up under retries

Keep the hot path short and split it into stages:

- Verify before parsing JSON. Read the raw request body, validate the signature, and check a timestamp window. If it fails, return 4xx.

- Parse and validate the schema. Decode JSON and confirm required fields (event id, type, tenant/account).

- Compute an idempotency key. Prefer the provider’s event id. If missing, build a stable key.

- Record the key with a unique write. If it already exists, return 2xx immediately. If it’s new, only continue after the write succeeds.

- Do the business work off the edge. Enqueue a job with the event payload (or a reference). Dedupe at the webhook entrypoint, not inside the worker.

After you return 2xx, you can safely do slower actions like calling payment APIs, sending emails, or updating your database.
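Put together, the stages might look like the sketch below. It is framework-agnostic and makes several assumptions: the header names X-Timestamp and X-Signature, the timestamp-dot-body signed input, the id/type/tenant payload fields, and the SECRET value are all hypothetical, and enqueue stands in for whatever job queue you use.

```python
import hashlib
import hmac
import json
import sqlite3
import time
from typing import Callable, Mapping, Optional

SECRET = b"whsec_example"  # hypothetical shared secret; load from config in practice
TOLERANCE_SECONDS = 300


def make_store() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE webhook_receipts ("
                 "idempotency_key TEXT NOT NULL UNIQUE, status TEXT NOT NULL)")
    return conn


def handle_webhook(raw_body: bytes, headers: Mapping[str, str], conn: sqlite3.Connection,
                   enqueue: Callable[[dict], None], now: Optional[float] = None) -> int:
    """Return the HTTP status code to send back to the provider."""
    # Stage 1: verify signature and freshness before parsing anything.
    ts, sig = headers.get("X-Timestamp", ""), headers.get("X-Signature", "")
    try:
        sent_at = int(ts)
    except ValueError:
        return 400
    expected = hmac.new(SECRET, ts.encode() + b"." + raw_body, hashlib.sha256).hexdigest()
    if not sig or not hmac.compare_digest(expected.encode(), sig.encode()):
        return 401
    current = time.time() if now is None else now
    if abs(current - sent_at) > TOLERANCE_SECONDS:
        return 400
    # Stage 2: parse and check required fields.
    try:
        event = json.loads(raw_body)
        event_id, event_type, tenant = event["id"], event["type"], event["tenant"]
    except (ValueError, KeyError, TypeError):
        return 400
    # Stage 3: compute the idempotency key, scoped by tenant.
    key = f"{tenant}:{event_id}"
    # Stage 4: record it with a unique write; a conflict means duplicate.
    try:
        with conn:
            conn.execute("INSERT INTO webhook_receipts VALUES (?, 'received')", (key,))
    except sqlite3.IntegrityError:
        return 200
    # Stage 5: hand the work to a queue and answer quickly.
    enqueue({"key": key, "type": event_type, "event": event})
    return 200
```

One gap this sketch leaves open: if enqueue fails after the receipt is written, the event is recorded but never processed, so a real handler needs a way to find and requeue receipts that stay in the received state.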

For troubleshooting, attach a correlation set to logs: request id, event id (idempotency key), tenant id, event type, and the decision (accepted vs duplicate). If a customer reports a double charge, you can trace one event across retries quickly.

Ordering, concurrency, and multi-tenant edge cases

Webhooks aren’t a queue. You might receive event B before event A, or get the same event twice at the same time. If your code assumes clean ordering, you’ll eventually overwrite good data with older data or apply side effects twice.

Out-of-order delivery: accept it

Design handlers to be safe even when events arrive late. For update-style events, only apply changes if they’re newer than what you already stored. “Newer” might be a version number, a sequence, or an updated_at timestamp provided by the sender. If you don’t have any of those, keep your own “last processed” marker per object and treat older updates as no-ops.

Also, don’t treat creates as special. If you process an “update” before a “create,” your handler should upsert the record and later ignore the stale create.
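A small sketch of the "apply only if newer" rule, using an in-memory dict in place of a real table. It assumes the sender provides an integer version per object; the function name apply_event and the field names are hypothetical.

```python
from typing import Any, Dict


def apply_event(store: Dict[str, Dict[str, Any]], object_id: str,
                version: int, fields: Dict[str, Any]) -> bool:
    """Upsert `fields` for `object_id` unless a same-or-newer version is stored.

    Returns True if the event was applied, False if it was ignored as stale.
    """
    current = store.get(object_id)
    if current is not None and version <= current["version"]:
        return False  # older or repeated update: treat as a no-op
    merged = dict(current or {})  # upsert: works whether or not the record exists
    merged.update(fields)
    merged["version"] = version
    store[object_id] = merged
    return True
```

For example, applying version 2 of an object and then receiving version 1 leaves the stored record at version 2. In a SQL database the same check would usually live in the WHERE clause of the update so that the comparison and the write happen atomically.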

Concurrency: the same event can hit twice at once

Deduplication must be race-safe. Two requests can both pass a “have I seen this?” check before either writes the answer.

The database unique constraint is the cleanest fix. Insert the dedupe record first, then do the work, then mark it done. If the work is long, store status (received, processing, succeeded, failed) and only retry safely when a prior attempt clearly failed or timed out.
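For long-running work, a status-based claim might look like the following sketch. The claimed_at column, the STALE_AFTER_SECONDS value, and the helper names are assumptions for illustration. The key point is that both the insert and the takeover are single atomic statements, so two concurrent requests cannot both win.

```python
import sqlite3

STALE_AFTER_SECONDS = 600  # hypothetical: when a 'processing' claim counts as abandoned


def make_store() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE webhook_receipts ("
                 "idempotency_key TEXT NOT NULL UNIQUE, "
                 "status TEXT NOT NULL, claimed_at REAL NOT NULL)")
    return conn


def try_claim(conn: sqlite3.Connection, key: str, now: float) -> bool:
    """Return True if the caller may run the work for this key."""
    try:
        with conn:
            conn.execute("INSERT INTO webhook_receipts VALUES (?, 'processing', ?)", (key, now))
        return True  # first writer wins
    except sqlite3.IntegrityError:
        pass
    # Take over only if the earlier attempt clearly failed or went stale.
    with conn:
        cur = conn.execute(
            "UPDATE webhook_receipts SET status = 'processing', claimed_at = ? "
            "WHERE idempotency_key = ? AND (status = 'failed' "
            "OR (status = 'processing' AND claimed_at < ?))",
            (now, key, now - STALE_AFTER_SECONDS),
        )
    return cur.rowcount == 1


def finish(conn: sqlite3.Connection, key: str, ok: bool) -> None:
    with conn:
        conn.execute("UPDATE webhook_receipts SET status = ? WHERE idempotency_key = ?",
                     ("succeeded" if ok else "failed", key))
```

Retrying a stale or failed claim is only safe if the work itself tolerates being run again, for example by recording each completed side effect before moving to the next.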

Multi-tenant keys: avoid cross-customer collisions

If you serve multiple tenants, include the tenant identifier in your dedupe key. Otherwise two customers could share the same event_id and block each other.

A practical key format is tenant_id + provider + event_id (or tenant_id + provider + object_id + version).

Partial failures matter too. If you charge a card but crash before marking the event succeeded, a retry can charge again unless you record what already happened.

Common mistakes that cause double processing

Most duplicate processing problems are predictable, not random.

Verifying the signature too late is a classic one. If you write to the database, send email, or charge a card and only then check the signature, a forged or replayed request can still do damage. Validation has to happen before any side effect.

Another frequent issue is reading the request body the wrong way. Some frameworks parse JSON and then re-serialize it (changing whitespace, field order, or encoding). If your provider’s signature is computed over the raw bytes, verification will fail if you validate against a modified body. Don’t “temporarily accept” failed signatures to keep the system running. That turns signature checks into theater.

Other patterns that show up often:

- Deduping based on timestamps alone. Two real events can share a timestamp, and an attacker can copy one.

- Returning 500 for duplicates. The sender sees an error and retries harder, creating a retry storm.

- Treating “already processed” as an exception instead of a normal outcome.

- Logging secrets or embedding them in client-side code.

If you detect the same event ID twice, respond with a 2xx and do nothing else. That’s usually the safest way to stop retries.

A simple real-world example: preventing duplicate charges

A common support nightmare: a customer says they were charged twice. Your payment provider sends a payment_succeeded webhook, your server creates an order, and then a retry or replay hits the same endpoint again. If your handler runs fulfillment or billing logic twice, you now have a real mess.

Replay protection helps in layers. Signature verification ensures that only a sender holding the shared secret (normally your provider) can produce events you accept. A timestamp window limits how long a captured request remains usable. But legitimate retries are still normal, and that’s where dedupe via an idempotency key matters most.

A clean pattern is:

- Extract the provider’s event ID (or build one from stable fields).

- Use it as an idempotency key, like provider:event_id:account_id.

- Insert the key into storage with a unique constraint.

- If insert succeeds, process the order.

- If it already exists, return 200 and do nothing.

What the customer sees: one charge, one receipt, and a consistent order status even if the provider retries five times.

Checklist and next steps

If you want webhook replay protection that holds up in production, focus on a few non-negotiables:

- Verify the signature before any business logic (and before logging fields you don’t fully trust).

- Reject requests with timestamps outside your allowed window, and keep your servers time-synced.

- Deduplicate with a unique idempotency key stored atomically.

- Return consistent 2xx responses for duplicates so the sender stops retrying.

- Log safely: no secrets, and include correlation fields (event ID, request ID, tenant ID) for tracing.

A quick test that catches most mistakes: send the exact same webhook payload five times in a row, then send it again after your timestamp window expires. You should see one business action, several fast “already processed” responses, and a clean rejection once it’s too old.

If you inherited an AI-generated webhook handler that’s double-processing under retries, it’s often a small set of fixes: raw-body signature verification, a timestamp window, and database-backed idempotency. If you want a second set of eyes, FixMyMess (fixmymess.ai) can run a free code audit to pinpoint where verification, security hardening, and dedupe are breaking down before you ship more changes.

FAQ

Why am I receiving the same webhook multiple times?

Most webhook providers only guarantee at-least-once delivery. If your endpoint times out, returns a 5xx, or the response gets lost, the provider retries the same event. Those retries are normal, and you should design your handler to safely do nothing on repeats.

What’s the difference between webhook duplicates and replay attacks?

A duplicate is usually a legitimate retry of the same real event, caused by timeouts, errors, or response loss. A replay is when an old, previously valid request is sent again later to trigger the same effect twice. You should accept legitimate retries safely, but reject stale replays by enforcing freshness and deduping by a stable event key.

If I verify the signature, do I still need idempotency?

A signature proves the payload wasn’t changed and that the sender knows your shared secret, which blocks most spoofed requests. It does not stop the same valid event from being delivered multiple times, because retries can still carry a correct signature. Signature checks are necessary, but dedupe is what prevents double processing.

How do I validate webhook signatures correctly without false failures?

Validate against the raw request body bytes exactly as received, then compute the expected HMAC (or provider-specific algorithm) and compare using a constant-time function. If you verify a parsed or re-serialized JSON body, tiny formatting changes can break verification and tempt teams to “temporarily accept” invalid signatures, which is dangerous.

What timestamp window should I use to block replays?

Use a small skew window as a default, often around 5 minutes, so normal network delays still pass but captured requests expire quickly. The timestamp must be part of what’s signed; otherwise an attacker can change it. Keep your servers time-synced, because clock drift is a common reason good events get rejected.

What should I use as an idempotency key for webhook dedupe?

Start with the provider’s event_id or message_id if it exists, because it stays the same across retries. If you must build your own, derive it from stable fields like provider name, event type, resource ID, tenant ID, and a provider timestamp, often hashed together. Don’t use your own receive time or a random UUID, because duplicates won’t match.

Why is database-backed dedupe better than using a cache or in-memory lock?

A database write with a unique constraint is the most reliable way to make dedupe race-safe across multiple servers and parallel requests. The common pattern is “insert receipt first, then process,” so only one request wins and the others become no-ops. In-memory locks and caches tend to fail when you scale or restart.

Should I return 200 or an error when I detect a duplicate webhook?

For duplicates, return a consistent 2xx once you’ve confirmed you’ve already processed that event, so the provider stops retrying and you avoid a retry storm. For invalid signatures or timestamps outside your window, return a 4xx and do no work. The key idea is to avoid side effects unless the request is both authentic and new enough.

How do I handle out-of-order webhooks and concurrent deliveries?

Assume events can arrive out of order and design handlers to be safe when they do. Apply updates only if they’re newer than what you have (using a provider version, sequence, or updated_at when available), and prefer upserts so “update before create” doesn’t break you. Separately, make dedupe race-safe with a unique idempotency key so two identical deliveries can’t both run.

How do I stop webhook retries from causing double charges or duplicate invoices?

A common cause is processing the webhook twice because there’s no idempotency guard at the entrypoint, or because a crash happens after charging but before recording success. Check your logs for the same provider event ID appearing multiple times, and verify you’re inserting an idempotency receipt before side effects. If you inherited an AI-generated webhook handler that’s double-processing, FixMyMess can run a free code audit and apply the typical fixes—raw-body signature verification, timestamp windows, and database-backed idempotency—so retries become safe no-ops.